🗺️ HPC series — you are here

1. HPC Cluster basics — SSH, file transfer, Micromamba, Slurm, dependencies 2. Resequencing pipeline on HPC — BWA-MEM2 + GATK, variant calling 3. ChIP-Seq pipeline on HPC — minimap2 + MACS3, peak calling 4. 🔵 RNA-Seq pipeline (this page) — STAR + featureCounts, DE analysis

✅ Before you start — pre-class checklist

- You can

ssh <netid>@pronghorn.rc.unr.eduand see the Pronghorn promptsacctmgr show user $USER withassocshows accountcpu-s5-bch709-6/ partitioncpu-core-0~/scratchsymlink exists and points under/data/gpfs/assoc/bch709-6/<netid>micromamba --versionworks on the login node (shell hook in~/.bashrc)- You ran the laptop version of RNA-Seq tutorial at least once so the biology makes sense

Any box unchecked? → go back to the HPC Cluster lesson first.

Using Pronghorn (High-Performance Computing)

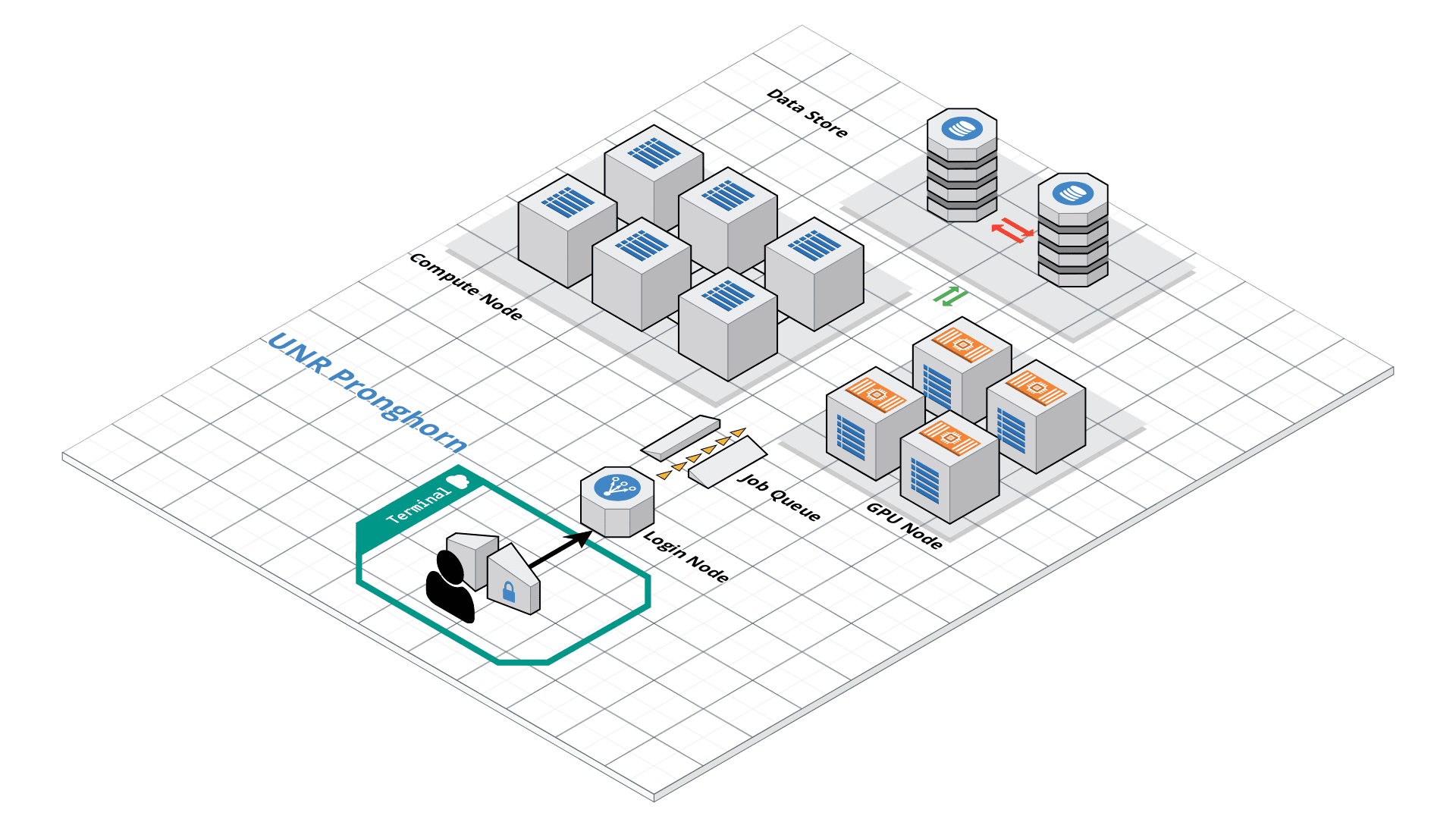

Pronghorn is the University of Nevada, Reno’s new High-Performance Computing (HPC) cluster. The GPU-accelerated system is designed, built and maintained by the Office of Information Technology’s HPC Team. Pronghorn and the HPC Team supports general research across the Nevada System of Higher Education (NSHE).

Pronghorn is composed of CPU, GPU, and Storage subsystems interconnected by a 100Gb/s non-blocking Intel Omni-Path fabric. The CPU partition features 93 nodes, 2,976 CPU cores, and 21TiB of memory. The GPU partition features 44 NVIDIA Tesla P100 GPUs, 352 CPU cores, and 2.75TiB of memory. The storage system uses the IBM SpectrumScale file system to provide 1PB of high-performance storage. The computational and storage capabilities of Pronghorn will regularly expand to meet NSHE computing demands.

Pronghorn is collocated at the Switch Citadel Campus located 25 miles East of the University of Nevada, Reno. Switch is the definitive leader of sustainable data center design and operation. The Switch Citadel is rated Tier 5 Platinum, and will be the largest, most advanced data center campus on the planet.

Slurm Start Tutorial

Resource sharing on a supercomputer dedicated to technical and/or scientific computing is often organized by a piece of software called a resource manager or job scheduler. Users submit jobs, which are scheduled and allocated resources (CPU time, memory, etc.) by the resource manager.

Slurm is a resource manager and job scheduler designed to do just that, and much more. It was originally created by people at the Livermore Computing Center, and has grown into a full-fledge open-source software backed up by a large community, commercially supported by the original developers, and installed in many of the Top500 supercomputers.

Gathering information Slurm offers many commands you can use to interact with the system. For instance, the sinfo command gives an overview of the resources offered by the cluster, while the squeue command shows to which jobs those resources are currently allocated.

By default, sinfo lists the partitions that are available. A partition is a set of compute nodes (computers dedicated to… computing) grouped logically. Typical examples include partitions dedicated to batch processing, debugging, post processing, or visualization.

sinfo

sinfo

PARTITION AVAIL TIMELIMIT NODES STATE NODELIST

cpu-s2-core-0 up 14-00:00:0 2 mix cpu-[8-9]

cpu-s2-core-0 up 14-00:00:0 7 alloc cpu-[1-2,4-6,78-79]

cpu-s2-core-0 up 14-00:00:0 44 idle cpu-[0,3,7,10-47,64,76-77]

cpu-s3-core-0* up 2:00:00 2 mix cpu-[8-9]

cpu-s3-core-0* up 2:00:00 7 alloc cpu-[1-2,4-6,78-79]

cpu-s3-core-0* up 2:00:00 44 idle cpu-[0,3,7,10-47,64,76-77]

gpu-s2-core-0 up 14-00:00:0 11 idle gpu-[0-10]

cpu-s6-core-0 up 15:00 2 idle cpu-[65-66]

cpu-s1-pgl-0 up 14-00:00:0 1 mix cpu-49

cpu-s1-pgl-0 up 14-00:00:0 1 alloc cpu-48

cpu-s1-pgl-0 up 14-00:00:0 2 idle cpu-[50-51]

In the above example, we see two partitions, named batch and debug. The latter is the default partition as it is marked with an asterisk. All nodes of the debug partition are idle, while two of the batch partition are being used.

The sinfo command also lists the time limit (column TIMELIMIT) to which jobs are subject. On every cluster, jobs are limited to a maximum run time, to allow job rotation and let every user a chance to see their job being started. Generally, the larger the cluster, the smaller the maximum allowed time. You can find the details on the cluster page.

You can actually specify precisely what information you would like sinfo to output by using its –format argument. For more details, have a look at the command manpage with man sinfo.

squeue

The squeue command shows the list of jobs which are currently running (they are in the RUNNING state, noted as ‘R’) or waiting for resources (noted as ‘PD’, short for PENDING).

squeue

JOBID PARTITION NAME USER ST TIME NODES NODELIST(REASON)

983204 cpu-s2-co neb_K jzhang23 R 6-09:05:47 1 cpu-6

983660 cpu-s2-co RT3.sl yinghanc R 12:56:17 1 cpu-9

983659 cpu-s2-co RT4.sl yinghanc R 12:56:21 1 cpu-8

983068 cpu-s2-co Gd-bound dcantu R 7-06:16:01 2 cpu-[78-79]

983067 cpu-s2-co Gd-unbou dcantu R 1-17:41:56 2 cpu-[1-2]

983472 cpu-s2-co ub-all dcantu R 3-10:05:01 2 cpu-[4-5]

982604 cpu-s1-pg wrap wyim R 12-14:35:23 1 cpu-49

983585 cpu-s1-pg wrap wyim R 1-06:28:29 1 cpu-48

983628 cpu-s1-pg wrap wyim R 13:44:46 1 cpu-49

Text editor

SBATCH

Now the question is: How do you create a job?

A job consists in two parts: resource requests and job steps. Resource requests consist in a number of CPUs, computing expected duration, amounts of RAM or disk space, etc. Job steps describe tasks that must be done, software which must be run.

The typical way of creating a job is to write a submission script. A submission script is a shell script, e.g. a Bash script, whose comments, if they are prefixed with SBATCH, are understood by Slurm as parameters describing resource requests and other submissions options. You can get the complete list of parameters from the sbatch manpage man sbatch.

Important

The SBATCH directives must appear at the top of the submission file, before any other line except for the very first line which should be the shebang (e.g. #!/bin/bash). The script itself is a job step. Other job steps are created with the srun command. For instance, the following script, hypothetically named submit.sh,

nano submit.sh

#!/bin/bash

#SBATCH --job-name=test

#SBATCH --mail-type=all

#SBATCH --mail-user=<YOUR_EMAIL>

#SBATCH --ntasks=1

#SBATCH --mem-per-cpu=1g

#SBATCH --time=8:10:00

#SBATCH --account=cpu-s5-bch709-6

#SBATCH --partition=cpu-core-0

#SBATCH -o test_%j.out

for i in {1..1000};

do

echo $i;

sleep 1;

done

would request one CPU for 10 minutes, along with 1g of RAM, in the default queue. When started, the job would run a first job step srun hostname, which will launch the UNIX command hostname on the node on which the requested CPU was allocated. Then, a second job step will start the sleep command. Note that the –job-name parameter allows giving a meaningful name to the job and the –output parameter defines the file to which the output of the job must be sent.

Once the submission script is written properly, you need to submit it to slurm through the sbatch command, which, upon success, responds with the jobid attributed to the job. (The dollar sign below is the shell prompt)

chmod 775 submit.sh

sbatch submit.sh

sbatch: Submitted batch job 99999999

How to cancel the job?

scancel <JOB ID>

Scratch disk space — already set up

You already created ~/scratch (symlink to /data/gpfs/assoc/bch709-6/<your_netid>) in the HPC Cluster lesson. All paths in this lesson use ~/scratch directly. If ls -la ~/scratch doesn’t show a symlink to your scratch directory, go back and create it first.

Importing Data from the NCBI Sequence Read Archive (SRA) using the DE

Working path

cd ~/scratch

pwd

Micromamba environment

We use Micromamba for package management on Pronghorn — see the HPC Cluster lesson for installation.

micromamba create -n RNASEQ_bch709 -c conda-forge -c bioconda python=3.11 -y

micromamba activate RNASEQ_bch709

micromamba install -c conda-forge -c bioconda \

minimap2 star 'samtools>=1.20' subread \

openjdk=17 'trinity>=2.15' gffread seqkit kraken2 'fastp>=0.24' \

perl-dbi perl-dbd-sqlite perl-html-parser \

pandas numpy -y

# NOTE 1: `perl-bioperl` is intentionally NOT installed. Its current bioconda

# build pins libzlib<1.3, which conflicts with modern samtools/Trinity/kraken2.

# Trinity assembly itself does not need BioPerl — it is only required by a

# few legacy auxiliary scripts. If you ever need BioPerl, install it later

# in a SEPARATE env: `micromamba create -n bioperl -c bioconda perl-bioperl`

# NOTE 2: we deliberately do NOT install `sra-tools`. Bioconda's sra-tools 3.x

# is built against GLIBC 2.27+, newer than Pronghorn's system libc — so

# `prefetch` / `fastq-dump` crash on the compute nodes with

# "GLIBC_2.27 not found". The download steps below pull FASTQ from ENA over

# HTTPS with `curl`, which works regardless of the system GLIBC.

# Upgrade pip first — older pip can't find the prebuilt `tiktoken`

# manylinux wheel (a transitive multiqc dep), tries to build it from

# Rust source, fails on Pronghorn (no Rust compiler).

pip install --upgrade pip

# MultiQC + pinned deps. `tiktoken<0.8` is the safety pin — older

# tiktoken has stable cp311 linux wheels.

pip install --prefer-binary \

'numpy<2.0' 'pyarrow<17' 'tiktoken<0.8' multiqc

Patch libcrypto so samtools runs (do this now, not after it crashes):

Bioconda’s samtools is linked against libcrypto.so.1.0.0, but the current OpenSSL package in the env ships libcrypto.so.3 (or .1.1). Without a symlink you get:

samtools: error while loading shared libraries: libcrypto.so.1.0.0: cannot open shared object file

Create the symlink once, right after activating:

# Env must be ACTIVE so $CONDA_PREFIX points at the env folder

cd "$CONDA_PREFIX/lib"

if [ -f libcrypto.so.1.1 ]; then ln -sf libcrypto.so.1.1 libcrypto.so.1.0.0

elif [ -f libcrypto.so.3 ]; then ln -sf libcrypto.so.3 libcrypto.so.1.0.0

fi

cd - > /dev/null

# Verify

samtools --version | head -1 # → samtools 1.xx (no libcrypto error)

If samtools --version prints a version, you’re done. If it still errors, ask the instructor before moving on.

SRA

Sequence Read Archive (SRA) data, available through multiple cloud providers and NCBI servers, is the largest publicly available repository of high throughput sequencing data. The archive accepts data from all branches of life as well as metagenomic and environmental surveys.

Searching the SRA: Searching the SRA can be complicated. Often a paper or reference will specify the accession number(s) connected to a dataset. You can search flexibly using a number of terms (such as the organism name) or the filters (e.g. DNA vs. RNA). The SRA Help Manual provides several useful explanations. It is important to know is that projects are organized and related at several levels, and some important terms include:

Bioproject: A BioProject is a collection of biological data related to a single initiative, originating from a single organization or from a consortium of coordinating organizations; see for example Bio Project 272719 Bio Sample: A description of the source materials for a project Run: These are the actual sequencing runs (usually starting with SRR); see for example SRR1761506

Publication (Arabidopsis)

SRA Bioproject site

https://www.ncbi.nlm.nih.gov/bioproject/PRJNA272719

Runinfo

| Run | ReleaseDate | LoadDate | spots | bases | spots_with_mates | avgLength | size_MB | AssemblyName | download_path | Experiment | LibraryName | LibraryStrategy | LibrarySelection | LibrarySource | LibraryLayout | InsertSize | InsertDev | Platform | Model | SRAStudy | BioProject | Study_Pubmed_id | ProjectID | Sample | BioSample | SampleType | TaxID | ScientificName | SampleName | g1k_pop_code | source | g1k_analysis_group | Subject_ID | Sex | Disease | Tumor | Affection_Status | Analyte_Type | Histological_Type | Body_Site | CenterName | Submission | dbgap_study_accession | Consent | RunHash | ReadHash |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| SRR1761506 | 1/15/2016 15:51 | 1/15/2015 12:43 | 7379945 | 1490748890 | 7379945 | 202 | 899 | https://sra-downloadb.be-md.ncbi.nlm.nih.gov/sos1/sra-pub-run-5/SRR1761506/SRR1761506.1 | SRX844600 | RNA-Seq | cDNA | TRANSCRIPTOMIC | PAIRED | 0 | 0 | ILLUMINA | Illumina HiSeq 2500 | SRP052302 | PRJNA272719 | 3 | 272719 | SRS820503 | SAMN03285048 | simple | 3702 | Arabidopsis thaliana | GSM1585887 | no | GEO | SRA232612 | public | F335FB96DDD730AC6D3AE4F6683BF234 | 12818EB5275BCB7BCB815E147BFD0619 | |||||||||||||

| SRR1761507 | 1/15/2016 15:51 | 1/15/2015 12:43 | 9182965 | 1854958930 | 9182965 | 202 | 1123 | https://sra-downloadb.be-md.ncbi.nlm.nih.gov/sos1/sra-pub-run-5/SRR1761507/SRR1761507.1 | SRX844601 | RNA-Seq | cDNA | TRANSCRIPTOMIC | PAIRED | 0 | 0 | ILLUMINA | Illumina HiSeq 2500 | SRP052302 | PRJNA272719 | 3 | 272719 | SRS820504 | SAMN03285045 | simple | 3702 | Arabidopsis thaliana | GSM1585888 | no | GEO | SRA232612 | public | 00FD62759BF7BBAEF123BF5960B2A616 | A61DCD3B96AB0796AB5E969F24F81B76 | |||||||||||||

| SRR1761508 | 1/15/2016 15:51 | 1/15/2015 12:47 | 19060611 | 3850243422 | 19060611 | 202 | 2324 | https://sra-downloadb.be-md.ncbi.nlm.nih.gov/sos1/sra-pub-run-5/SRR1761508/SRR1761508.1 | SRX844602 | RNA-Seq | cDNA | TRANSCRIPTOMIC | PAIRED | 0 | 0 | ILLUMINA | Illumina HiSeq 2500 | SRP052302 | PRJNA272719 | 3 | 272719 | SRS820505 | SAMN03285046 | simple | 3702 | Arabidopsis thaliana | GSM1585889 | no | GEO | SRA232612 | public | B75A3E64E88B1900102264522D2281CB | 657987ABC8043768E99BD82947608CAC | |||||||||||||

| SRR1761509 | 1/15/2016 15:51 | 1/15/2015 12:51 | 16555739 | 3344259278 | 16555739 | 202 | 2016 | https://sra-downloadb.be-md.ncbi.nlm.nih.gov/sos1/sra-pub-run-5/SRR1761509/SRR1761509.1 | SRX844603 | RNA-Seq | cDNA | TRANSCRIPTOMIC | PAIRED | 0 | 0 | ILLUMINA | Illumina HiSeq 2500 | SRP052302 | PRJNA272719 | 3 | 272719 | SRS820506 | SAMN03285049 | simple | 3702 | Arabidopsis thaliana | GSM1585890 | no | GEO | SRA232612 | public | 27CA2B82B69EEF56EAF53D3F464EEB7B | 2B56CA09F3655F4BBB412FD2EE8D956C | |||||||||||||

| SRR1761510 | 1/15/2016 15:51 | 1/15/2015 12:46 | 12700942 | 2565590284 | 12700942 | 202 | 1552 | https://sra-downloadb.be-md.ncbi.nlm.nih.gov/sos1/sra-pub-run-5/SRR1761510/SRR1761510.1 | SRX844604 | RNA-Seq | cDNA | TRANSCRIPTOMIC | PAIRED | 0 | 0 | ILLUMINA | Illumina HiSeq 2500 | SRP052302 | PRJNA272719 | 3 | 272719 | SRS820508 | SAMN03285050 | simple | 3702 | Arabidopsis thaliana | GSM1585891 | no | GEO | SRA232612 | public | D3901795C7ED74B8850480132F4688DA | 476A9484DCFCF9FFFDAADAAF4CE5D0EA | |||||||||||||

| SRR1761511 | 1/15/2016 15:51 | 1/15/2015 12:44 | 13353992 | 2697506384 | 13353992 | 202 | 1639 | https://sra-downloadb.be-md.ncbi.nlm.nih.gov/sos1/sra-pub-run-5/SRR1761511/SRR1761511.1 | SRX844605 | RNA-Seq | cDNA | TRANSCRIPTOMIC | PAIRED | 0 | 0 | ILLUMINA | Illumina HiSeq 2500 | SRP052302 | PRJNA272719 | 3 | 272719 | SRS820507 | SAMN03285047 | simple | 3702 | Arabidopsis thaliana | GSM1585892 | no | GEO | SRA232612 | public | 5078379601081319FCBF67C7465C404A | E3B4195AFEA115ACDA6DEF6E4AA7D8DF | |||||||||||||

| SRR1761512 | 1/15/2016 15:51 | 1/15/2015 12:44 | 8134575 | 1643184150 | 8134575 | 202 | 1067 | https://sra-downloadb.be-md.ncbi.nlm.nih.gov/sos1/sra-pub-run-5/SRR1761512/SRR1761512.1 | SRX844606 | RNA-Seq | cDNA | TRANSCRIPTOMIC | PAIRED | 0 | 0 | ILLUMINA | Illumina HiSeq 2500 | SRP052302 | PRJNA272719 | 3 | 272719 | SRS820509 | SAMN03285051 | simple | 3702 | Arabidopsis thaliana | GSM1585893 | no | GEO | SRA232612 | public | DDB8F763B71B1E29CC9C1F4C53D88D07 | 8F31604D3A4120A50B2E49329A786FA6 | |||||||||||||

| SRR1761513 | 1/15/2016 15:51 | 1/15/2015 12:43 | 7333641 | 1481395482 | 7333641 | 202 | 960 | https://sra-downloadb.be-md.ncbi.nlm.nih.gov/sos1/sra-pub-run-5/SRR1761513/SRR1761513.1 | SRX844607 | RNA-Seq | cDNA | TRANSCRIPTOMIC | PAIRED | 0 | 0 | ILLUMINA | Illumina HiSeq 2500 | SRP052302 | PRJNA272719 | 3 | 272719 | SRS820510 | SAMN03285053 | simple | 3702 | Arabidopsis thaliana | GSM1585894 | no | GEO | SRA232612 | public | 4068AE245EB0A81DFF02889D35864AF2 | 8E05C4BC316FBDFEBAA3099C54E7517B | |||||||||||||

| SRR1761514 | 1/15/2016 15:51 | 1/15/2015 12:44 | 6160111 | 1244342422 | 6160111 | 202 | 807 | https://sra-downloadb.be-md.ncbi.nlm.nih.gov/sos1/sra-pub-run-5/SRR1761514/SRR1761514.1 | SRX844608 | RNA-Seq | cDNA | TRANSCRIPTOMIC | PAIRED | 0 | 0 | ILLUMINA | Illumina HiSeq 2500 | SRP052302 | PRJNA272719 | 3 | 272719 | SRS820511 | SAMN03285059 | simple | 3702 | Arabidopsis thaliana | GSM1585895 | no | GEO | SRA232612 | public | 0A1F3E9192E7F9F4B3758B1CE514D264 | 81BFDB94C797624B34AFFEB554CE4D98 | |||||||||||||

| SRR1761515 | 1/15/2016 15:51 | 1/15/2015 12:44 | 7988876 | 1613752952 | 7988876 | 202 | 1048 | https://sra-downloadb.be-md.ncbi.nlm.nih.gov/sos1/sra-pub-run-5/SRR1761515/SRR1761515.1 | SRX844609 | RNA-Seq | cDNA | TRANSCRIPTOMIC | PAIRED | 0 | 0 | ILLUMINA | Illumina HiSeq 2500 | SRP052302 | PRJNA272719 | 3 | 272719 | SRS820512 | SAMN03285054 | simple | 3702 | Arabidopsis thaliana | GSM1585896 | no | GEO | SRA232612 | public | 39B37A0BD484C736616C5B0A45194525 | 85B031D74DF90AD1815AA1BBBF1F12BD | |||||||||||||

| SRR1761516 | 1/15/2016 15:51 | 1/15/2015 12:44 | 8770090 | 1771558180 | 8770090 | 202 | 1152 | https://sra-downloadb.be-md.ncbi.nlm.nih.gov/sos1/sra-pub-run-5/SRR1761516/SRR1761516.1 | SRX844610 | RNA-Seq | cDNA | TRANSCRIPTOMIC | PAIRED | 0 | 0 | ILLUMINA | Illumina HiSeq 2500 | SRP052302 | PRJNA272719 | 3 | 272719 | SRS820514 | SAMN03285055 | simple | 3702 | Arabidopsis thaliana | GSM1585897 | no | GEO | SRA232612 | public | E4728DFBF0F9F04B89A5B041FA570EB3 | B96545CB9C4C3EE1C9F1E8B3D4CE9D24 | |||||||||||||

| SRR1761517 | 1/15/2016 15:51 | 1/15/2015 12:44 | 8229157 | 1662289714 | 8229157 | 202 | 1075 | https://sra-downloadb.be-md.ncbi.nlm.nih.gov/sos1/sra-pub-run-5/SRR1761517/SRR1761517.1 | SRX844611 | RNA-Seq | cDNA | TRANSCRIPTOMIC | PAIRED | 0 | 0 | ILLUMINA | Illumina HiSeq 2500 | SRP052302 | PRJNA272719 | 3 | 272719 | SRS820513 | SAMN03285058 | simple | 3702 | Arabidopsis thaliana | GSM1585898 | no | GEO | SRA232612 | public | C05BC519960B075038834458514473EB | 4EF7877FC59FF5214DBF2E2FE36D67C5 | |||||||||||||

| SRR1761518 | 1/15/2016 15:51 | 1/15/2015 12:44 | 8760931 | 1769708062 | 8760931 | 202 | 1072 | https://sra-downloadb.be-md.ncbi.nlm.nih.gov/sos1/sra-pub-run-5/SRR1761518/SRR1761518.1 | SRX844612 | RNA-Seq | cDNA | TRANSCRIPTOMIC | PAIRED | 0 | 0 | ILLUMINA | Illumina HiSeq 2500 | SRP052302 | PRJNA272719 | 3 | 272719 | SRS820515 | SAMN03285052 | simple | 3702 | Arabidopsis thaliana | GSM1585899 | no | GEO | SRA232612 | public | 7D8333182062545CECD5308A222FF506 | 382F586C4BF74E474D8F9282E36BE4EC | |||||||||||||

| SRR1761519 | 1/15/2016 15:51 | 1/15/2015 12:44 | 6643107 | 1341907614 | 6643107 | 202 | 811 | https://sra-downloadb.be-md.ncbi.nlm.nih.gov/sos1/sra-pub-run-5/SRR1761519/SRR1761519.1 | SRX844613 | RNA-Seq | cDNA | TRANSCRIPTOMIC | PAIRED | 0 | 0 | ILLUMINA | Illumina HiSeq 2500 | SRP052302 | PRJNA272719 | 3 | 272719 | SRS820516 | SAMN03285056 | simple | 3702 | Arabidopsis thaliana | GSM1585900 | no | GEO | SRA232612 | public | 163BD8073D7E128D8AD1B253A722DD08 | DFBCC891EB5FA97490E32935E54C9E14 | |||||||||||||

| SRR1761520 | 1/15/2016 15:51 | 1/15/2015 12:44 | 8506472 | 1718307344 | 8506472 | 202 | 1040 | https://sra-downloadb.be-md.ncbi.nlm.nih.gov/sos1/sra-pub-run-5/SRR1761520/SRR1761520.1 | SRX844614 | RNA-Seq | cDNA | TRANSCRIPTOMIC | PAIRED | 0 | 0 | ILLUMINA | Illumina HiSeq 2500 | SRP052302 | PRJNA272719 | 3 | 272719 | SRS820517 | SAMN03285062 | simple | 3702 | Arabidopsis thaliana | GSM1585901 | no | GEO | SRA232612 | public | 791BD0D8840AA5F1D74E396668638DA1 | AF4694425D34F84095F6CFD6F4A09936 | |||||||||||||

| SRR1761521 | 1/15/2016 15:51 | 1/15/2015 12:46 | 13166085 | 2659549170 | 13166085 | 202 | 1609 | https://sra-downloadb.be-md.ncbi.nlm.nih.gov/sos1/sra-pub-run-5/SRR1761521/SRR1761521.1 | SRX844615 | RNA-Seq | cDNA | TRANSCRIPTOMIC | PAIRED | 0 | 0 | ILLUMINA | Illumina HiSeq 2500 | SRP052302 | PRJNA272719 | 3 | 272719 | SRS820518 | SAMN03285057 | simple | 3702 | Arabidopsis thaliana | GSM1585902 | no | GEO | SRA232612 | public | 47C40480E9B7DB62B4BEE0F2193D16B3 | 1443C58A943C07D3275AB12DC31644A9 | |||||||||||||

| SRR1761522 | 1/15/2016 15:51 | 1/15/2015 12:49 | 9496483 | 1918289566 | 9496483 | 202 | 1162 | https://sra-downloadb.be-md.ncbi.nlm.nih.gov/sos1/sra-pub-run-5/SRR1761522/SRR1761522.1 | SRX844616 | RNA-Seq | cDNA | TRANSCRIPTOMIC | PAIRED | 0 | 0 | ILLUMINA | Illumina HiSeq 2500 | SRP052302 | PRJNA272719 | 3 | 272719 | SRS820519 | SAMN03285061 | simple | 3702 | Arabidopsis thaliana | GSM1585903 | no | GEO | SRA232612 | public | BB05DF11E1F95427530D69DB5E0FA667 | 7706862FB2DF957E4041D2064A691CF6 | |||||||||||||

| SRR1761523 | 1/15/2016 15:51 | 1/15/2015 12:46 | 14999315 | 3029861630 | 14999315 | 202 | 1832 | https://sra-downloadb.be-md.ncbi.nlm.nih.gov/sos1/sra-pub-run-5/SRR1761523/SRR1761523.1 | SRX844617 | RNA-Seq | cDNA | TRANSCRIPTOMIC | PAIRED | 0 | 0 | ILLUMINA | Illumina HiSeq 2500 | SRP052302 | PRJNA272719 | 3 | 272719 | SRS820520 | SAMN03285060 | simple | 3702 | Arabidopsis thaliana | GSM1585904 | no | GEO | SRA232612 | public | 101D3A151E632224C09A702BD2F59CF5 | 0AC99FAA6B8941F89FFCBB8B1910696E |

Subset of data

| Sample information | Run |

|---|---|

| WT_rep1 | SRR1761506 |

| WT_rep2 | SRR1761507 |

| WT_rep3 | SRR1761508 |

| ABA_rep1 | SRR1761509 |

| ABA_rep2 | SRR1761510 |

| ABA_rep3 | SRR1761511 |

🔁 Already ran fastq-dump + trim in HPC_cluster?

The HPC Cluster lesson Workflow Step 1·2 downloads the same Arabidopsis SRR1761506-511 dataset and trims with fastp into the shared workspace at

~/scratch/rnaseq/raw_data/and~/scratch/rnaseq/trim/. To reuse those results here (no need to re-download or re-trim):# Sanity-check the shared workspace already has the trimmed reads ls ~/scratch/rnaseq/trim/SRR1761506_1.trimmed.fq.gz \ ~/scratch/rnaseq/trim/SRR1761506_2.trimmed.fq.gz # Set up the ATH project sub-directory under the shared parent mkdir -p ~/scratch/rnaseq/ATH cd ~/scratch/rnaseq/ATH ln -s ~/scratch/rnaseq/raw_data raw_data # reuse existing FASTQ ln -s ~/scratch/rnaseq/trim trim # reuse trimmed reads mkdir -p reference bam logs # only the new directories (logs/ for Slurm stdout)If the

lsabove prints both files, skip to “Reference downloads” below — STAR index + alignment is where this lesson really starts. Otherwise (fresh start, no HPC_cluster prerequisites done), follow the standard setup below.

mkdir -p ~/scratch/rnaseq/ATH

cd ~/scratch/rnaseq/ATH

mkdir -p raw_data trim reference bam logs

pwd

FASTQ download submission (from ENA)

Bioconda’s sra-tools 3.x crashes on Pronghorn (built against GLIBC 2.27+, newer than the system libc). We pull the same FASTQ from ENA, which mirrors every SRA run as ready-to-use .fastq.gz over HTTPS — no SRA toolkit needed.

cd ~/scratch/rnaseq/ATH

nano fastq-dump.sh

#!/bin/bash

#SBATCH --job-name=fastqdump_ATH

#SBATCH --cpus-per-task=2

#SBATCH --time=2-15:00:00

#SBATCH --mem=16g

#SBATCH --mail-type=all

#SBATCH --mail-user=<YOUR_EMAIL>

#SBATCH -o fastq-dump.out # STDOUT & STDERR

#SBATCH --account=cpu-s5-bch709-6

#SBATCH --partition=cpu-core-0

set -euo pipefail

mkdir -p ./raw_data

for SRR in SRR1761506 SRR1761507 SRR1761508 SRR1761509 SRR1761510 SRR1761511; do

URLS=$(curl -fsSL --retry 3 --max-time 60 \

"https://www.ebi.ac.uk/ena/portal/api/filereport?accession=${SRR}&result=read_run&fields=fastq_ftp&format=tsv" \

| tail -n +2 | awk -F'\t' '{print $NF}' | tr ';' '\n' | sed '/^$/d')

[ -n "${URLS}" ] || { echo "ERROR: ENA returned no fastq URLs for ${SRR}"; exit 1; }

for U in ${URLS}; do

OUT=./raw_data/$(basename "${U}")

[ -s "${OUT}" ] && { echo "[fastq] ${OUT} already present, skipping"; continue; }

echo "[fastq] ${SRR} -> https://${U}"

curl -fsSL --retry 3 --retry-delay 30 --max-time 3600 -o "${OUT}" "https://${U}"

done

done

Read Trimming with fastp

cd ~/scratch/rnaseq/ATH

nano trim.sh

#!/bin/bash

#SBATCH --job-name=trim_ATH

#SBATCH --cpus-per-task=2

#SBATCH --time=2-15:00:00

#SBATCH --mem=16g

#SBATCH --mail-type=all

#SBATCH --mail-user=<YOUR_EMAIL>

#SBATCH -o trim.out # STDOUT & STDERR

#SBATCH --account=cpu-s5-bch709-6

#SBATCH --partition=cpu-core-0

fastp --in1 raw_data/SRR1761506_1.fastq.gz --in2 raw_data/SRR1761506_2.fastq.gz --out1 trim/SRR1761506_1.trimmed.fq.gz --out2 trim/SRR1761506_2.trimmed.fq.gz --detect_adapter_for_pe --qualified_quality_phred 20 --length_required 50 --thread 2 --html trim/SRR1761506_fastp.html --json trim/SRR1761506_fastp.json

fastp --in1 raw_data/SRR1761507_1.fastq.gz --in2 raw_data/SRR1761507_2.fastq.gz --out1 trim/SRR1761507_1.trimmed.fq.gz --out2 trim/SRR1761507_2.trimmed.fq.gz --detect_adapter_for_pe --qualified_quality_phred 20 --length_required 50 --thread 2 --html trim/SRR1761507_fastp.html --json trim/SRR1761507_fastp.json

fastp --in1 raw_data/SRR1761508_1.fastq.gz --in2 raw_data/SRR1761508_2.fastq.gz --out1 trim/SRR1761508_1.trimmed.fq.gz --out2 trim/SRR1761508_2.trimmed.fq.gz --detect_adapter_for_pe --qualified_quality_phred 20 --length_required 50 --thread 2 --html trim/SRR1761508_fastp.html --json trim/SRR1761508_fastp.json

fastp --in1 raw_data/SRR1761509_1.fastq.gz --in2 raw_data/SRR1761509_2.fastq.gz --out1 trim/SRR1761509_1.trimmed.fq.gz --out2 trim/SRR1761509_2.trimmed.fq.gz --detect_adapter_for_pe --qualified_quality_phred 20 --length_required 50 --thread 2 --html trim/SRR1761509_fastp.html --json trim/SRR1761509_fastp.json

fastp --in1 raw_data/SRR1761510_1.fastq.gz --in2 raw_data/SRR1761510_2.fastq.gz --out1 trim/SRR1761510_1.trimmed.fq.gz --out2 trim/SRR1761510_2.trimmed.fq.gz --detect_adapter_for_pe --qualified_quality_phred 20 --length_required 50 --thread 2 --html trim/SRR1761510_fastp.html --json trim/SRR1761510_fastp.json

fastp --in1 raw_data/SRR1761511_1.fastq.gz --in2 raw_data/SRR1761511_2.fastq.gz --out1 trim/SRR1761511_1.trimmed.fq.gz --out2 trim/SRR1761511_2.trimmed.fq.gz --detect_adapter_for_pe --qualified_quality_phred 20 --length_required 50 --thread 2 --html trim/SRR1761511_fastp.html --json trim/SRR1761511_fastp.json

Reference downloads

We download the Arabidopsis TAIR10 genome and annotation directly from TAIR (www.arabidopsis.org). No JGI/Phytozome account required, no zip-bundle to unpack.

cd ~/scratch/rnaseq/ATH

mkdir -p bam reference logs

cd reference

pwd

Download Arabidopsis thaliana TAIR10 from TAIR

TAIR’s API serves the canonical TAIR10 chromosome FASTA and GFF3. The host uses a self-signed certificate, so we pass -k to curl (same as wget --no-check-certificate).

cd ~/scratch/rnaseq/ATH/reference

# Genome FASTA (gzipped, ~35 MB)

curl -kfsSL --retry 3 --max-time 600 \

-o TAIR10_chr_all.fas.gz \

"https://www.arabidopsis.org/api/download-files/download?filePath=Genes/TAIR10_genome_release/TAIR10_chromosome_files/TAIR10_chr_all.fas.gz"

gunzip -f TAIR10_chr_all.fas.gz

# Gene annotation (GFF3)

curl -kfsSL --retry 3 --max-time 600 \

-o TAIR10_GFF3_genes.gff \

"https://www.arabidopsis.org/api/download-files/download?filePath=Genes/TAIR10_genome_release/TAIR10_gff3/TAIR10_GFF3_genes.gff"

ls -lh TAIR10_chr_all.fas TAIR10_GFF3_genes.gff

seqkit stats TAIR10_chr_all.fas

Convert GFF to GTF

STAR’s --sjdbGTFfile expects GTF, so we convert the GFF3 with gffread:

cd ~/scratch/rnaseq/ATH/reference

gffread TAIR10_GFF3_genes.gff -T -F --keep-exon-attrs -o TAIR10_GFF3_genes.gtf

Create reference index

cd ~/scratch/rnaseq/ATH/reference

ls -algh

nano index.sh

#!/bin/bash

#SBATCH --job-name=index_ATH

#SBATCH --cpus-per-task=12

#SBATCH --time=2-15:00:00

#SBATCH --mem=48g

#SBATCH --mail-type=all

#SBATCH --mail-user=<YOUR_EMAIL>

#SBATCH -o index.out # STDOUT & STDERR

#SBATCH --account=cpu-s5-bch709-6

#SBATCH --partition=cpu-core-0

STAR --runThreadN 12 --runMode genomeGenerate --genomeDir . --genomeFastaFiles TAIR10_chr_all.fas --sjdbGTFfile TAIR10_GFF3_genes.gtf --sjdbOverhang 99 --genomeSAindexNbases 12

Mapping the reads to genome index

cd ~/scratch/rnaseq/ATH/

ls -algh

nano align.sh

#!/bin/bash

#SBATCH --job-name=align_ATH

#SBATCH --cpus-per-task=8

#SBATCH --time=2-15:00:00

#SBATCH --mem=32g

#SBATCH --mail-type=all

#SBATCH --mail-user=<YOUR_EMAIL>

#SBATCH -o align.out # STDOUT & STDERR

#SBATCH --account=cpu-s5-bch709-6

#SBATCH --partition=cpu-core-0

# NOTE: do NOT hard-code --dependency here. Pass it on the `sbatch` command line

# so the job ID is filled in automatically — see the "Submit the pipeline with

# dependency chaining" section below.

STAR --runMode alignReads --runThreadN 8 --readFilesCommand zcat --outFilterMultimapNmax 10 --alignIntronMin 25 --alignIntronMax 10000 --genomeDir ~/scratch/rnaseq/ATH/reference/ --readFilesIn ~/scratch/rnaseq/ATH/trim/SRR1761506_1.trimmed.fq.gz ~/scratch/rnaseq/ATH/trim/SRR1761506_2.trimmed.fq.gz --outSAMtype BAM SortedByCoordinate --outFileNamePrefix ~/scratch/rnaseq/ATH/bam/SRR1761506.bam

STAR --runMode alignReads --runThreadN 8 --readFilesCommand zcat --outFilterMultimapNmax 10 --alignIntronMin 25 --alignIntronMax 10000 --genomeDir ~/scratch/rnaseq/ATH/reference/ --readFilesIn ~/scratch/rnaseq/ATH/trim/SRR1761507_1.trimmed.fq.gz ~/scratch/rnaseq/ATH/trim/SRR1761507_2.trimmed.fq.gz --outSAMtype BAM SortedByCoordinate --outFileNamePrefix ~/scratch/rnaseq/ATH/bam/SRR1761507.bam

STAR --runMode alignReads --runThreadN 8 --readFilesCommand zcat --outFilterMultimapNmax 10 --alignIntronMin 25 --alignIntronMax 10000 --genomeDir ~/scratch/rnaseq/ATH/reference/ --readFilesIn ~/scratch/rnaseq/ATH/trim/SRR1761508_1.trimmed.fq.gz ~/scratch/rnaseq/ATH/trim/SRR1761508_2.trimmed.fq.gz --outSAMtype BAM SortedByCoordinate --outFileNamePrefix ~/scratch/rnaseq/ATH/bam/SRR1761508.bam

STAR --runMode alignReads --runThreadN 8 --readFilesCommand zcat --outFilterMultimapNmax 10 --alignIntronMin 25 --alignIntronMax 10000 --genomeDir ~/scratch/rnaseq/ATH/reference/ --readFilesIn ~/scratch/rnaseq/ATH/trim/SRR1761509_1.trimmed.fq.gz ~/scratch/rnaseq/ATH/trim/SRR1761509_2.trimmed.fq.gz --outSAMtype BAM SortedByCoordinate --outFileNamePrefix ~/scratch/rnaseq/ATH/bam/SRR1761509.bam

STAR --runMode alignReads --runThreadN 8 --readFilesCommand zcat --outFilterMultimapNmax 10 --alignIntronMin 25 --alignIntronMax 10000 --genomeDir ~/scratch/rnaseq/ATH/reference/ --readFilesIn ~/scratch/rnaseq/ATH/trim/SRR1761510_1.trimmed.fq.gz ~/scratch/rnaseq/ATH/trim/SRR1761510_2.trimmed.fq.gz --outSAMtype BAM SortedByCoordinate --outFileNamePrefix ~/scratch/rnaseq/ATH/bam/SRR1761510.bam

STAR --runMode alignReads --runThreadN 8 --readFilesCommand zcat --outFilterMultimapNmax 10 --alignIntronMin 25 --alignIntronMax 10000 --genomeDir ~/scratch/rnaseq/ATH/reference/ --readFilesIn ~/scratch/rnaseq/ATH/trim/SRR1761511_1.trimmed.fq.gz ~/scratch/rnaseq/ATH/trim/SRR1761511_2.trimmed.fq.gz --outSAMtype BAM SortedByCoordinate --outFileNamePrefix ~/scratch/rnaseq/ATH/bam/SRR1761511.bam

Counting reads with featureCounts — featureCounts.sh

Once every BAM is sorted by coordinate, count read pairs against the TAIR10 GTF. With subread ≥ 2.0.2, paired-end fragment counting requires both -p (paired-end) and --countReadPairs (count pairs as 1 rather than 2). The output ATH.featureCount.cnt is what the MultiQC and DESeq2/EdgeR steps below consume.

cd ~/scratch/rnaseq/ATH

nano featureCounts.sh

#!/bin/bash

#SBATCH --job-name=featurecounts_ATH

#SBATCH --cpus-per-task=8

#SBATCH --time=06:00:00

#SBATCH --mem=16g

#SBATCH --mail-type=FAIL,END

#SBATCH --mail-user=<YOUR_EMAIL>

#SBATCH -o logs/featurecounts_%j.out

#SBATCH --account=cpu-s5-bch709-6

#SBATCH --partition=cpu-core-0

set -euo pipefail

PROJECT=~/scratch/rnaseq/ATH

cd "$PROJECT/bam"

# Hard-coded SRR list — matches the cohort used in fastq-dump.sh / align.sh above.

BAMS="SRR1761506.bamAligned.sortedByCoord.out.bam \

SRR1761507.bamAligned.sortedByCoord.out.bam \

SRR1761508.bamAligned.sortedByCoord.out.bam \

SRR1761509.bamAligned.sortedByCoord.out.bam \

SRR1761510.bamAligned.sortedByCoord.out.bam \

SRR1761511.bamAligned.sortedByCoord.out.bam"

featureCounts \

-T 8 \

-p --countReadPairs \

-a "$PROJECT/reference/TAIR10_GFF3_genes.gtf" \

-o ATH.featureCount.cnt \

${BAMS}

Submit & inspect (standalone):

sbatch featureCounts.sh

# when done:

cat ~/scratch/rnaseq/ATH/bam/ATH.featureCount.cnt.summary

(run_all.sh below wires this in automatically — you don’t need to submit it by hand.)

Submit the entire pipeline with one script — run_all.sh

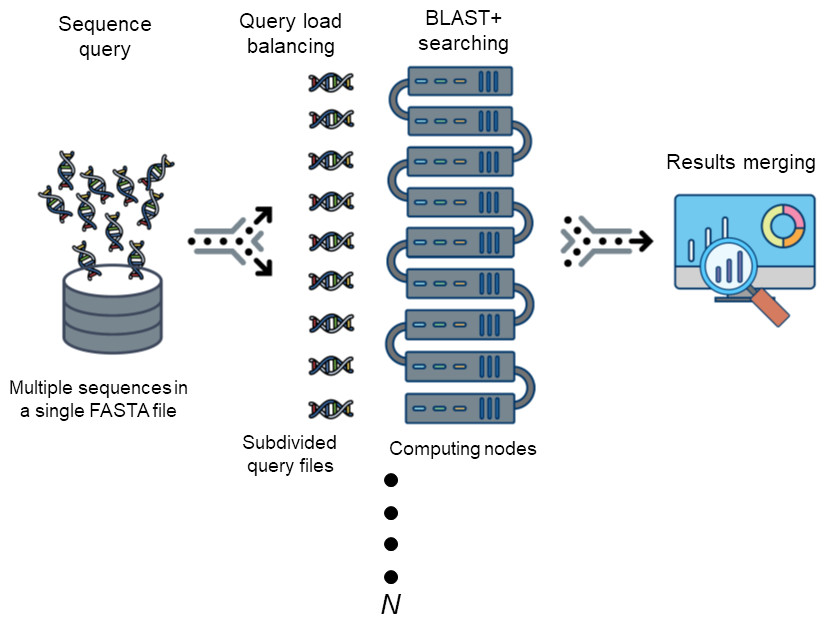

Rather than running each step by hand (submit → wait → submit → wait…), put every step into a driver script that submits them all at once. Slurm queues each job in the right order using --dependency; the whole pipeline runs unattended.

Pipeline DAG:

fastq-dump ──┐

├─→ trim ─→ align ─→ featureCounts ─→ multiqc

index ─────┘

(Download + index run in parallel; trim waits on download; align waits on both trim and index; featureCounts waits on align; multiqc waits on featureCounts.)

Save as run_all.sh:

#!/bin/bash

# run_all.sh — submit the entire Arabidopsis RNA-Seq pipeline with one command.

# Slurm enforces the correct order via --dependency; you can walk away.

set -euo pipefail

PROJECT=~/scratch/rnaseq/ATH

cd "$PROJECT"

# Activate the env in THIS shell so every sbatch below inherits the PATH

# (sbatch --export=ALL is the default — the submitted jobs see the same tools)

export MAMBA_ROOT_PREFIX="${MAMBA_ROOT_PREFIX:-$HOME/micromamba}"

eval "$(micromamba shell hook --shell=bash)"

micromamba activate RNASEQ_bch709

# 1. Download FASTQs (no prerequisites)

DUMP_JID=$(sbatch --parsable fastq-dump.sh)

# 2. Build STAR index (independent of download — runs in parallel)

IDX_JID=$(cd "$PROJECT/reference" && sbatch --parsable index.sh)

# 3. Trim reads (waits for download)

TRIM_JID=$(sbatch --parsable --dependency=afterok:${DUMP_JID} trim.sh)

# 4. Align to genome (waits for BOTH trim and index)

ALIGN_JID=$(sbatch --parsable --dependency=afterok:${TRIM_JID}:${IDX_JID} align.sh)

# 5. featureCounts (waits for align)

FC_JID=$(sbatch --parsable --dependency=afterok:${ALIGN_JID} featureCounts.sh)

# 6. MultiQC aggregation (waits for featureCounts; afterany lets it run even if FC partially failed)

MQC_JID=$(sbatch --parsable --dependency=afterany:${FC_JID} multiqc.sh)

cat <<EOF

Submitted RNA-Seq pipeline (Arabidopsis):

fastq-dump ${DUMP_JID}

index ${IDX_JID}

trim ${TRIM_JID}

align ${ALIGN_JID}

featureCounts ${FC_JID}

multiqc ${MQC_JID}

Monitor with: squeue -u \$USER

Cancel all: scancel ${DUMP_JID} ${IDX_JID} ${TRIM_JID} ${ALIGN_JID} ${FC_JID} ${MQC_JID}

Final report (after pipeline finishes): ~/scratch/rnaseq/ATH/qc/ATH_report.html

EOF

Run it:

chmod +x run_all.sh

bash run_all.sh

squeue -u $USER # later jobs show state PD with reason (Dependency)

You’ll see 4 job IDs printed immediately. Close your laptop — Slurm takes over. When everything finishes, check ls bam/ for sorted BAM outputs and log files for Finished successfully.

🧑💻 Hands-on walkthrough — submit the pipeline step-by-step

If you want to see exactly what run_all.sh does (or debug one step), submit each stage manually. Every sbatch returns a job ID that the next step depends on.

Do this first (login shell — one time):

micromamba activate RNASEQ_bch709

cd ~/scratch/rnaseq/ATH

Then submit each step — each line is one command:

# --- Step 1: download FASTQs (no prerequisites) ---

DUMP_JID=$(sbatch --parsable fastq-dump.sh)

echo "fastq-dump → $DUMP_JID"

# --- Step 2: build STAR index (no prerequisites, runs in parallel with Step 1) ---

IDX_JID=$(cd reference && sbatch --parsable index.sh)

echo "index → $IDX_JID"

# --- Step 3: trim (waits for fastq-dump) ---

TRIM_JID=$(sbatch --parsable --dependency=afterok:${DUMP_JID} trim.sh)

echo "trim → $TRIM_JID"

# --- Step 4: align (waits for BOTH trim and index) ---

ALIGN_JID=$(sbatch --parsable --dependency=afterok:${TRIM_JID}:${IDX_JID} align.sh)

echo "align → $ALIGN_JID"

# --- Step 5: featureCounts (waits for align) ---

FC_JID=$(sbatch --parsable --dependency=afterok:${ALIGN_JID} featureCounts.sh)

echo "featureCounts → $FC_JID"

# --- Step 6: MultiQC (waits for featureCounts) ---

MQC_JID=$(sbatch --parsable --dependency=afterany:${FC_JID} multiqc.sh)

echo "multiqc → $MQC_JID"

# Check that everything is queued

squeue -u $USER

# Steps 3-6 should show state PD with reason (Dependency)

Why copy the commands into your terminal, not a script?

The hands-on walkthrough is literally what

run_all.shdoes — but by typing each line you see each job ID appear and can inspect things in between. Once you’re comfortable, just runbash run_all.shnext time.

Same pattern for every other organism

For Drosophila, Mouse, Tomato, Mosquito, etc., copy

run_all.shinto that organism’s project directory, updatePROJECT=~/scratch/rnaseq/<ORG>, and run it. The script structure doesn’t change — only the path.

Don’t hard-code dependencies inside the

#SBATCHblockSome older examples had

#SBATCH --dependency=afterok:<PREVIOUS_JOBID(trim_ATH)>insidealign.sh. That’s fragile — you’d have to edit the file and paste the previous job’s ID every single time. Instead, pass--dependencyon thesbatchcommand line (as shown inrun_all.shabove). If you see a#SBATCH --dependency=...line inside any script, delete it.

For a full explanation of --dependency, afterok vs afterany, and the --parsable flag, see the Job dependencies section in the HPC Cluster lesson.

micromamba install -c conda-forge tree

Drosophila

Publication (Drosophila)

Not formally published — data deposited at NCBI as PRJNA770108 (Gene expression profiling of D. melanogaster larval brains after chronic alcohol exposure).

✅ Before you start the Drosophila walkthrough — pre-class checklist

- You finished the Arabidopsis section above (or at least understand

sbatch,--dependency, andrun_all.sh)micromamba activate RNASEQ_bch709works in your login shell (STAR, fastp, subread/featureCounts, multiqc all on PATH)~/scratch/rnaseq/already exists from the Arabidopsis run; we’ll addDrosophila/next toATH/- You have ~8 GB free under

~/scratch(6 PE samples × ~30 M reads + STAR index + BAMs)- You replaced

<YOUR_EMAIL>in your previous SBATCH headers — keep doing that hereAny box unchecked? → re-read the Arabidopsis run_all.sh walkthrough before continuing.

🪰 Drosophila-specific notes (vs. Arabidopsis)

- Longer introns →

--alignIntronMax 100000(Arabidopsis used 10 000). The longest D. melanogaster introns reach ~70 kb.- Smaller genome (~143 Mb) →

--genomeSAindexNbases 12(same value used for ATH; smaller-than-default 14 keeps the SA index in-RAM)- Cohort: 6 paired-end samples (~25–35 M read pairs each), 3 ethanol-treated vs 3 controls — perfect for a 2-group DESeq2 contrast

- Reference: FlyBase r6.42 (FB2021_05). Genome FASTA + GTF come straight from

ftp.flybase.net; Ensembl BDGP6.32 r104 is kept as a fallback mirror infastq-dump.shstyle.

SRA Bioproject site

https://www.ncbi.nlm.nih.gov/bioproject/PRJNA770108

Gene expression profiling of Drosophila melanogaster larval brains after chronic alcohol exposure (fruit fly)

We sequenced mRNA extracted from brains of (1) D. melanogaster larvae exposed to food containing 5% ethanol (v/v) for 6 consecutive days, and (2) age-matched untreated control larvae that grew in regular food. Differential gene expression between the two groups was calculated and reported. Each group consisted of 3 biological replicates of 30 brains each. Overall design: examination of mRNA levels in brains of D. melanogaster larvae after chronic ethanol exposure was performed using next generation sequencing (RNA-seq).

Subset of data

| Sample information | Run |

|---|---|

| Control | SRR16287545 |

| Control | SRR16287546 |

| Control | SRR16287547 |

| Ethanol treatment | SRR16287548 |

| Ethanol treatment | SRR16287549 |

| Ethanol treatment | SRR16287550 |

Project layout

mkdir -p ~/scratch/rnaseq

cd ~/scratch/rnaseq

mkdir -p Drosophila && cd Drosophila

mkdir -p raw_data trim bam reference logs qc

pwd

# example output (your <netid> will differ)

/data/gpfs/assoc/bch709-6/<netid>/scratch/rnaseq/Drosophila

samples.txt — one place to list the cohort

Every script below reads samples.txt so you only edit the cohort once. Tab-delimited: sample_name<TAB>SRR<TAB>condition. The header row exists so the awk loops can simply NR>1 to skip it.

cd ~/scratch/rnaseq/Drosophila

nano samples.txt

Paste exactly (real tab characters between columns — nano writes them literally):

sample srr condition

ctrl_rep1 SRR16287545 Control

ctrl_rep2 SRR16287546 Control

ctrl_rep3 SRR16287547 Control

etoh_rep1 SRR16287548 Ethanol

etoh_rep2 SRR16287549 Ethanol

etoh_rep3 SRR16287550 Ethanol

Verify:

cat samples.txt

awk -F'\t' 'NR>1{print $2}' samples.txt # SRR list the loops will iterate over

sample srr condition

ctrl_rep1 SRR16287545 Control

ctrl_rep2 SRR16287546 Control

ctrl_rep3 SRR16287547 Control

etoh_rep1 SRR16287548 Ethanol

etoh_rep2 SRR16287549 Ethanol

etoh_rep3 SRR16287550 Ethanol

SRR16287545

SRR16287546

SRR16287547

SRR16287548

SRR16287549

SRR16287550

fastq download (from ENA)

Same ENA-over-HTTPS approach as Arabidopsis (no broken sra-tools GLIBC dependency). The loop reads SRR IDs from samples.txt instead of being hard-coded.

cd ~/scratch/rnaseq/Drosophila

nano fastq-dump.sh

#!/bin/bash

#SBATCH --job-name=fastqdump_Drosophila

#SBATCH --cpus-per-task=2

#SBATCH --time=2-15:00:00

#SBATCH --mem=16g

#SBATCH --mail-type=all

#SBATCH --mail-user=<YOUR_EMAIL>

#SBATCH -o logs/fastq-dump_%j.out # STDOUT & STDERR

#SBATCH --account=cpu-s5-bch709-6

#SBATCH --partition=cpu-core-0

set -euo pipefail

PROJECT=~/scratch/rnaseq/Drosophila

cd "$PROJECT"

mkdir -p raw_data logs

# Drive the loop from samples.txt (column 2 = SRR; NR>1 skips the header)

SRRS=$(awk -F'\t' 'NR>1{print $2}' samples.txt)

for SRR in ${SRRS}; do

URLS=$(curl -fsSL --retry 3 --max-time 60 \

"https://www.ebi.ac.uk/ena/portal/api/filereport?accession=${SRR}&result=read_run&fields=fastq_ftp&format=tsv" \

| tail -n +2 | awk -F'\t' '{print $NF}' | tr ';' '\n' | sed '/^$/d')

[ -n "${URLS}" ] || { echo "ERROR: ENA returned no fastq URLs for ${SRR}"; exit 1; }

for U in ${URLS}; do

OUT=raw_data/$(basename "${U}")

[ -s "${OUT}" ] && { echo "[fastq] ${OUT} already present, skipping"; continue; }

echo "[fastq] ${SRR} -> https://${U}"

curl -fsSL --retry 3 --retry-delay 30 --max-time 3600 -o "${OUT}" "https://${U}"

done

done

ls -lh raw_data/*.fastq.gz

Submit & inspect:

sbatch fastq-dump.sh

# wait for completion, then:

tail -n 20 logs/fastq-dump_<jobid>.out

ls -lh raw_data/

# example tail (your numbers will differ)

[fastq] SRR16287545 -> https://ftp.sra.ebi.ac.uk/vol1/fastq/SRR162/045/SRR16287545/SRR16287545_1.fastq.gz

[fastq] SRR16287545 -> https://ftp.sra.ebi.ac.uk/vol1/fastq/SRR162/045/SRR16287545/SRR16287545_2.fastq.gz

[fastq] SRR16287546 -> https://ftp.sra.ebi.ac.uk/vol1/fastq/SRR162/046/SRR16287546/SRR16287546_1.fastq.gz

...

[fastq] SRR16287550 -> https://ftp.sra.ebi.ac.uk/vol1/fastq/SRR162/050/SRR16287550/SRR16287550_2.fastq.gz

-rw-r--r-- 1 <netid> users 1.6G ... raw_data/SRR16287545_1.fastq.gz

-rw-r--r-- 1 <netid> users 1.7G ... raw_data/SRR16287545_2.fastq.gz

-rw-r--r-- 1 <netid> users 1.5G ... raw_data/SRR16287550_2.fastq.gz

Read trimming with fastp — loop driven by samples.txt

Instead of 6 hard-coded fastp lines, loop over the SRR column of samples.txt. The behaviour is identical (same Q20, same length filter, same paired-end adapter detection), but adding/removing a sample is a one-line edit to samples.txt.

cd ~/scratch/rnaseq/Drosophila

nano trim.sh

#!/bin/bash

#SBATCH --job-name=trim_Drosophila

#SBATCH --cpus-per-task=2

#SBATCH --time=2-15:00:00

#SBATCH --mem=16g

#SBATCH --mail-type=all

#SBATCH --mail-user=<YOUR_EMAIL>

#SBATCH -o logs/trim_%j.out # STDOUT & STDERR

#SBATCH --account=cpu-s5-bch709-6

#SBATCH --partition=cpu-core-0

set -euo pipefail

PROJECT=~/scratch/rnaseq/Drosophila

cd "$PROJECT"

mkdir -p trim logs

# Loop over every SRR listed in samples.txt (skip header)

while IFS=$'\t' read -r SAMPLE SRR COND; do

echo "[trim] ${SAMPLE} (${SRR}, ${COND})"

fastp \

--in1 raw_data/${SRR}_1.fastq.gz \

--in2 raw_data/${SRR}_2.fastq.gz \

--out1 trim/${SRR}_1.trimmed.fq.gz \

--out2 trim/${SRR}_2.trimmed.fq.gz \

--detect_adapter_for_pe \

--qualified_quality_phred 20 \

--length_required 50 \

--thread 2 \

--html trim/${SRR}_fastp.html \

--json trim/${SRR}_fastp.json

done < <(awk -F'\t' 'NR>1' samples.txt)

Expected per-sample fastp summary (printed to STDERR/STDOUT for each iteration):

# example fastp summary (your numbers will differ)

Read1 before filtering:

total reads: 28,432,117

total bases: 4,264,817,550

Q20 bases: 4,164,128,001 (97.64%)

Q30 bases: 3,981,724,902 (93.36%)

Read1 after filtering:

total reads: 27,946,201

Q20 rate: 98.61%

Q30 rate: 95.04%

Filtering result:

reads passed filter: 55,612,408

reads failed due to low quality: 488,802

reads failed due to too short: 122,306

reads with adapter trimmed: 1,884,109

Duplication rate: 6.83%

JSON report: trim/SRR16287545_fastp.json

HTML report: trim/SRR16287545_fastp.html

Click to see the equivalent expanded form (one fastp call per sample) — useful for understanding what the loop unrolls to

The loop above produces exactly the same six commands as this expanded version. It is shown here because seeing the unrolled form helps connect "what the loop runs" with "what `fastp` actually executes" the first time you read the script. ```bash fastp --in1 ~/scratch/rnaseq/Drosophila/raw_data/SRR16287545_1.fastq.gz --in2 ~/scratch/rnaseq/Drosophila/raw_data/SRR16287545_2.fastq.gz --out1 trim/SRR16287545_1.trimmed.fq.gz --out2 trim/SRR16287545_2.trimmed.fq.gz --detect_adapter_for_pe --qualified_quality_phred 20 --length_required 50 --thread 2 --html trim/SRR16287545_fastp.html --json trim/SRR16287545_fastp.json fastp --in1 ~/scratch/rnaseq/Drosophila/raw_data/SRR16287546_1.fastq.gz --in2 ~/scratch/rnaseq/Drosophila/raw_data/SRR16287546_2.fastq.gz --out1 trim/SRR16287546_1.trimmed.fq.gz --out2 trim/SRR16287546_2.trimmed.fq.gz --detect_adapter_for_pe --qualified_quality_phred 20 --length_required 50 --thread 2 --html trim/SRR16287546_fastp.html --json trim/SRR16287546_fastp.json fastp --in1 ~/scratch/rnaseq/Drosophila/raw_data/SRR16287547_1.fastq.gz --in2 ~/scratch/rnaseq/Drosophila/raw_data/SRR16287547_2.fastq.gz --out1 trim/SRR16287547_1.trimmed.fq.gz --out2 trim/SRR16287547_2.trimmed.fq.gz --detect_adapter_for_pe --qualified_quality_phred 20 --length_required 50 --thread 2 --html trim/SRR16287547_fastp.html --json trim/SRR16287547_fastp.json fastp --in1 ~/scratch/rnaseq/Drosophila/raw_data/SRR16287548_1.fastq.gz --in2 ~/scratch/rnaseq/Drosophila/raw_data/SRR16287548_2.fastq.gz --out1 trim/SRR16287548_1.trimmed.fq.gz --out2 trim/SRR16287548_2.trimmed.fq.gz --detect_adapter_for_pe --qualified_quality_phred 20 --length_required 50 --thread 2 --html trim/SRR16287548_fastp.html --json trim/SRR16287548_fastp.json fastp --in1 ~/scratch/rnaseq/Drosophila/raw_data/SRR16287549_1.fastq.gz --in2 ~/scratch/rnaseq/Drosophila/raw_data/SRR16287549_2.fastq.gz --out1 trim/SRR16287549_1.trimmed.fq.gz --out2 trim/SRR16287549_2.trimmed.fq.gz --detect_adapter_for_pe --qualified_quality_phred 20 --length_required 50 --thread 2 --html trim/SRR16287549_fastp.html --json trim/SRR16287549_fastp.json fastp --in1 ~/scratch/rnaseq/Drosophila/raw_data/SRR16287550_1.fastq.gz --in2 ~/scratch/rnaseq/Drosophila/raw_data/SRR16287550_2.fastq.gz --out1 trim/SRR16287550_1.trimmed.fq.gz --out2 trim/SRR16287550_2.trimmed.fq.gz --detect_adapter_for_pe --qualified_quality_phred 20 --length_required 50 --thread 2 --html trim/SRR16287550_fastp.html --json trim/SRR16287550_fastp.json ```Reference download

cd ~/scratch/rnaseq/Drosophila/reference

# FlyBase r6.42 (FB2021_05) — pinned for reproducibility. The dmel_r6.42

# directory is still hosted by FlyBase but only via HTTPS in newer releases;

# the legacy http:// URL sometimes 301-redirects in a way that wget mishandles.

# Use HTTPS + curl with retries; add an Ensembl mirror as fallback.

FLY_FA="https://ftp.flybase.net/genomes/Drosophila_melanogaster/dmel_r6.42_FB2021_05/fasta/dmel-all-chromosome-r6.42.fasta.gz"

FLY_GTF="https://ftp.flybase.net/genomes/Drosophila_melanogaster/dmel_r6.42_FB2021_05/gtf/dmel-all-r6.42.gtf.gz"

ENS_FA="https://ftp.ensembl.org/pub/release-104/fasta/drosophila_melanogaster/dna/Drosophila_melanogaster.BDGP6.32.dna.toplevel.fa.gz"

ENS_GTF="https://ftp.ensembl.org/pub/release-104/gtf/drosophila_melanogaster/Drosophila_melanogaster.BDGP6.32.104.gtf.gz"

curl -fsSL --retry 3 --max-time 1800 -o dmel.fasta.gz "${FLY_FA}" || curl -fsSL --retry 3 --max-time 1800 -o dmel.fasta.gz "${ENS_FA}"

curl -fsSL --retry 3 --max-time 600 -o dmel.gtf.gz "${FLY_GTF}" || curl -fsSL --retry 3 --max-time 600 -o dmel.gtf.gz "${ENS_GTF}"

gunzip -f dmel.fasta.gz dmel.gtf.gz

ls -lh dmel.fasta dmel.gtf

seqkit stats dmel.fasta

# example output (your numbers will differ slightly between releases)

-rw-r--r-- 1 <netid> users 145M ... dmel.fasta

-rw-r--r-- 1 <netid> users 41M ... dmel.gtf

file format type num_seqs sum_len min_len avg_len max_len

dmel.fasta FASTA DNA 1,870 143,726,002 54 76,858.8 32,079,331

Reference index (STAR genomeGenerate)

cd ~/scratch/rnaseq/Drosophila/reference

nano index.sh

#!/bin/bash

#SBATCH --job-name=index_Drosophila

#SBATCH --cpus-per-task=12

#SBATCH --time=2-15:00:00

#SBATCH --mem=48g

#SBATCH --mail-type=all

#SBATCH --mail-user=<YOUR_EMAIL>

#SBATCH -o ../logs/index_%j.out # STDOUT & STDERR

#SBATCH --account=cpu-s5-bch709-6

#SBATCH --partition=cpu-core-0

STAR --runThreadN 12 \

--runMode genomeGenerate \

--genomeDir . \

--genomeFastaFiles dmel.fasta \

--sjdbGTFfile dmel.gtf \

--sjdbOverhang 99 \

--genomeSAindexNbases 12

Submit & monitor:

cd ~/scratch/rnaseq/Drosophila/reference

sbatch index.sh

# when done:

tail -n 20 Log.out

ls -lh SA SAindex Genome

# example STAR Log.out tail (your timestamps will differ)

Apr 28 <date> ..... started STAR run

Apr 28 <date> ... starting to generate Genome files

Apr 28 <date> ... starting to sort Suffix Array. This may take a long time...

Apr 28 <date> ... loading chunks from disk, packing SA...

Apr 28 <date> ... finished generating suffix array

Apr 28 <date> ... finished generating Suffix Array index

Apr 28 <date> ..... processing annotations GTF

Apr 28 <date> ..... inserting junctions into the genome indices

Apr 28 <date> ... writing Genome to disk ...

Apr 28 <date> ... writing Suffix Array to disk ...

Apr 28 <date> ... writing SAindex to disk

Apr 28 <date> ..... finished successfully

DONE: Genome generation, EXITING

Mapping the reads to genome index — loop driven by samples.txt

Same loop pattern as trim.sh: read SRR from samples.txt, call STAR once per sample. The Drosophila-specific flag is --alignIntronMax 100000 (introns up to 100 kb).

cd ~/scratch/rnaseq/Drosophila

nano mapping.sh

#!/bin/bash

#SBATCH --job-name=align_Drosophila

#SBATCH --cpus-per-task=8

#SBATCH --time=2-15:00:00

#SBATCH --mem=32g

#SBATCH --mail-type=all

#SBATCH --mail-user=<YOUR_EMAIL>

#SBATCH -o logs/align_%j.out # STDOUT & STDERR

#SBATCH --account=cpu-s5-bch709-6

#SBATCH --partition=cpu-core-0

# NOTE: do NOT hard-code --dependency here. Pass it on the `sbatch` command line,

# e.g. ALIGN=$(sbatch --parsable --dependency=afterok:${TRIM_JID}:${IDX_JID} mapping.sh)

set -euo pipefail

PROJECT=~/scratch/rnaseq/Drosophila

cd "$PROJECT"

mkdir -p bam logs

while IFS=$'\t' read -r SAMPLE SRR COND; do

echo "[align] ${SAMPLE} (${SRR}, ${COND})"

STAR --runMode alignReads \

--runThreadN 8 \

--readFilesCommand zcat \

--outFilterMultimapNmax 10 \

--alignIntronMin 25 \

--alignIntronMax 100000 \

--genomeDir "$PROJECT/reference/" \

--readFilesIn "$PROJECT/trim/${SRR}_1.trimmed.fq.gz" \

"$PROJECT/trim/${SRR}_2.trimmed.fq.gz" \

--outSAMtype BAM SortedByCoordinate \

--outFileNamePrefix "$PROJECT/bam/${SRR}.bam"

done < <(awk -F'\t' 'NR>1' samples.txt)

Expected Log.final.out excerpt (per sample, written to bam/<SRR>.bamLog.final.out):

# example STAR Log.final.out (your numbers will differ)

Number of input reads | 27,946,201

Average input read length | 300

UNIQUE READS:

Uniquely mapped reads number | 24,011,203

Uniquely mapped reads % | 85.92%

MULTI-MAPPING READS:

Number of reads mapped to multiple loci | 2,210,884

% of reads mapped to multiple loci | 7.91%

...

Number of splices: Total | 14,902,331

Number of splices: GT/AG | 14,752,019

% of reads unmapped: too short | 5.62%

% of reads unmapped: other | 0.45%

Click to see the equivalent expanded form (one STAR call per sample)

```bash STAR --runMode alignReads --runThreadN 8 --readFilesCommand zcat --outFilterMultimapNmax 10 --alignIntronMin 25 --alignIntronMax 100000 --genomeDir ~/scratch/rnaseq/Drosophila/reference/ --readFilesIn ~/scratch/rnaseq/Drosophila/trim/SRR16287545_1.trimmed.fq.gz ~/scratch/rnaseq/Drosophila/trim/SRR16287545_2.trimmed.fq.gz --outSAMtype BAM SortedByCoordinate --outFileNamePrefix ~/scratch/rnaseq/Drosophila/bam/SRR16287545.bam STAR --runMode alignReads --runThreadN 8 --readFilesCommand zcat --outFilterMultimapNmax 10 --alignIntronMin 25 --alignIntronMax 100000 --genomeDir ~/scratch/rnaseq/Drosophila/reference/ --readFilesIn ~/scratch/rnaseq/Drosophila/trim/SRR16287546_1.trimmed.fq.gz ~/scratch/rnaseq/Drosophila/trim/SRR16287546_2.trimmed.fq.gz --outSAMtype BAM SortedByCoordinate --outFileNamePrefix ~/scratch/rnaseq/Drosophila/bam/SRR16287546.bam STAR --runMode alignReads --runThreadN 8 --readFilesCommand zcat --outFilterMultimapNmax 10 --alignIntronMin 25 --alignIntronMax 100000 --genomeDir ~/scratch/rnaseq/Drosophila/reference/ --readFilesIn ~/scratch/rnaseq/Drosophila/trim/SRR16287547_1.trimmed.fq.gz ~/scratch/rnaseq/Drosophila/trim/SRR16287547_2.trimmed.fq.gz --outSAMtype BAM SortedByCoordinate --outFileNamePrefix ~/scratch/rnaseq/Drosophila/bam/SRR16287547.bam STAR --runMode alignReads --runThreadN 8 --readFilesCommand zcat --outFilterMultimapNmax 10 --alignIntronMin 25 --alignIntronMax 100000 --genomeDir ~/scratch/rnaseq/Drosophila/reference/ --readFilesIn ~/scratch/rnaseq/Drosophila/trim/SRR16287548_1.trimmed.fq.gz ~/scratch/rnaseq/Drosophila/trim/SRR16287548_2.trimmed.fq.gz --outSAMtype BAM SortedByCoordinate --outFileNamePrefix ~/scratch/rnaseq/Drosophila/bam/SRR16287548.bam STAR --runMode alignReads --runThreadN 8 --readFilesCommand zcat --outFilterMultimapNmax 10 --alignIntronMin 25 --alignIntronMax 100000 --genomeDir ~/scratch/rnaseq/Drosophila/reference/ --readFilesIn ~/scratch/rnaseq/Drosophila/trim/SRR16287549_1.trimmed.fq.gz ~/scratch/rnaseq/Drosophila/trim/SRR16287549_2.trimmed.fq.gz --outSAMtype BAM SortedByCoordinate --outFileNamePrefix ~/scratch/rnaseq/Drosophila/bam/SRR16287549.bam STAR --runMode alignReads --runThreadN 8 --readFilesCommand zcat --outFilterMultimapNmax 10 --alignIntronMin 25 --alignIntronMax 100000 --genomeDir ~/scratch/rnaseq/Drosophila/reference/ --readFilesIn ~/scratch/rnaseq/Drosophila/trim/SRR16287550_1.trimmed.fq.gz ~/scratch/rnaseq/Drosophila/trim/SRR16287550_2.trimmed.fq.gz --outSAMtype BAM SortedByCoordinate --outFileNamePrefix ~/scratch/rnaseq/Drosophila/bam/SRR16287550.bam ```Counting reads with featureCounts — featureCounts.sh

Once every BAM is sorted by coordinate, count read pairs against the FlyBase GTF. With subread ≥ 2.0.2, paired-end fragment counting requires both -p (paired-end) and --countReadPairs (count pairs as 1 rather than 2).

cd ~/scratch/rnaseq/Drosophila

nano featureCounts.sh

#!/bin/bash

#SBATCH --job-name=featurecounts_Drosophila

#SBATCH --cpus-per-task=8

#SBATCH --time=06:00:00

#SBATCH --mem=16g

#SBATCH --mail-type=FAIL,END

#SBATCH --mail-user=<YOUR_EMAIL>

#SBATCH -o logs/featurecounts_%j.out

#SBATCH --account=cpu-s5-bch709-6

#SBATCH --partition=cpu-core-0

set -euo pipefail

PROJECT=~/scratch/rnaseq/Drosophila

cd "$PROJECT/bam"

# Build the BAM list from samples.txt so the file order matches the cohort definition

BAMS=$(awk -F'\t' 'NR>1{printf "%s.bamAligned.sortedByCoord.out.bam ", $2}' "$PROJECT/samples.txt")

featureCounts \

-T 8 \

-p --countReadPairs \

-a "$PROJECT/reference/dmel.gtf" \

-o Drosophila.featureCount.cnt \

${BAMS}

Submit & inspect:

sbatch featureCounts.sh

# when done:

cat Drosophila.featureCount.cnt.summary

head -3 Drosophila.featureCount.cnt | cut -f1-8

# example summary (your numbers will differ)

Status SRR16287545.bam... SRR16287546.bam... SRR16287547.bam... SRR16287548.bam... SRR16287549.bam... SRR16287550.bam...

Assigned 19842310 21055812 20018736 21349204 20177102 19998841

Unassigned_NoFeatures 2381204 2412017 2354611 2466093 2390411 2331109

Unassigned_Ambiguity 901844 918310 894217 927519 908744 879618

Unassigned_MultiMapping 1882104 1922001 1880411 1933214 1900328 1855402

...

# Headers + first gene row (your formatting will differ)

Geneid Chr Start End Strand Length SRR16287545.bamAligned.sortedByCoord.out.bam SRR16287546.bam...

FBgn0031208 2L 7529 9484 + 1955 412 441

% Assigned typically lands around 78–84 % for this dataset; the dominant unassigned class is NoFeatures (intergenic) followed by MultiMapping (rRNA loci, mostly).

MultiQC summary — multiqc.sh

Same one-stop QC report idea as Arabidopsis: walk the project, parse fastp JSON / STAR Log.final.out / featureCounts .summary / FastQC, and render a single HTML.

cd ~/scratch/rnaseq/Drosophila

nano multiqc.sh

#!/bin/bash

#SBATCH --job-name=multiqc_Drosophila

#SBATCH --account=cpu-s5-bch709-6

#SBATCH --partition=cpu-core-0

#SBATCH --cpus-per-task=2

#SBATCH --mem=8g

#SBATCH --time=01:00:00

#SBATCH --mail-type=FAIL,END

#SBATCH --mail-user=<YOUR_EMAIL>

#SBATCH -o logs/multiqc_%j.out

set -euo pipefail

cd ~/scratch/rnaseq/Drosophila

mkdir -p qc

# Pull together everything multiqc can parse under the project dir

multiqc . -o qc/ -n Drosophila_report --force \

--module fastp \

--module star \

--module featureCounts

Run it (after featureCounts has finished):

sbatch multiqc.sh

tail -n 15 logs/multiqc_<jobid>.out

# example multiqc log tail (your numbers will differ)

[INFO ] multiqc : This is MultiQC v1.21

[INFO ] multiqc : Search path : /data/gpfs/assoc/bch709-6/<netid>/scratch/rnaseq/Drosophila

[INFO ] fastp : Found 6 reports

[INFO ] star : Found 6 reports

[INFO ] featureCounts : Found 1 reports

...

[INFO ] multiqc : Compressing plot data

[INFO ] multiqc : Report : qc/Drosophila_report.html

[INFO ] multiqc : Data : qc/Drosophila_report_data

[INFO ] multiqc : MultiQC complete

Copy the report to your laptop and open it in a browser:

scp <netid>@pronghorn.rc.unr.edu:~/scratch/rnaseq/Drosophila/qc/Drosophila_report.html ./

open Drosophila_report.html

Submit the entire Drosophila pipeline with one script — run_all.sh

Same DAG as the Arabidopsis pipeline; the only changes are the project path and the addition of featureCounts between align and multiqc.

Pipeline DAG:

fastq-dump ──┐

├─→ trim ─→ align ─→ featureCounts ─→ multiqc

index ─────┘

Save as run_all.sh:

#!/bin/bash

# run_all.sh — submit the entire Drosophila RNA-Seq pipeline with one command.

# Slurm enforces the correct order via --dependency; you can walk away.

set -euo pipefail

PROJECT=~/scratch/rnaseq/Drosophila

cd "$PROJECT"

mkdir -p logs qc

# Activate the env in THIS shell so every sbatch below inherits the PATH

export MAMBA_ROOT_PREFIX="${MAMBA_ROOT_PREFIX:-$HOME/micromamba}"

eval "$(micromamba shell hook --shell=bash)"

micromamba activate RNASEQ_bch709

# 1. Download FASTQs (no prerequisites)

DUMP_JID=$(sbatch --parsable fastq-dump.sh)

# 2. Build STAR index (independent of download — runs in parallel)

IDX_JID=$(cd "$PROJECT/reference" && sbatch --parsable index.sh)

# 3. Trim reads (waits for download)

TRIM_JID=$(sbatch --parsable --dependency=afterok:${DUMP_JID} trim.sh)

# 4. Align to genome (waits for BOTH trim and index)

ALIGN_JID=$(sbatch --parsable --dependency=afterok:${TRIM_JID}:${IDX_JID} mapping.sh)

# 5. Count reads (waits for align)

FC_JID=$(sbatch --parsable --dependency=afterok:${ALIGN_JID} featureCounts.sh)

# 6. MultiQC aggregation (waits for featureCounts; afterany lets it run even if FC partially failed)

MQC_JID=$(sbatch --parsable --dependency=afterany:${FC_JID} multiqc.sh)

cat <<EOF

Submitted RNA-Seq pipeline (Drosophila):

fastq-dump ${DUMP_JID}

index ${IDX_JID}

trim ${TRIM_JID}

align ${ALIGN_JID}

featurecounts ${FC_JID}

multiqc ${MQC_JID}

Monitor with: squeue -u \$USER

Cancel all: scancel ${DUMP_JID} ${IDX_JID} ${TRIM_JID} ${ALIGN_JID} ${FC_JID} ${MQC_JID}

Final report (after pipeline finishes): ~/scratch/rnaseq/Drosophila/qc/Drosophila_report.html

EOF

Run it:

chmod +x run_all.sh

bash run_all.sh

squeue -u $USER

# example output (your job IDs will differ)

Submitted RNA-Seq pipeline (Drosophila):

fastq-dump <jobid>

index <jobid>

trim <jobid>

align <jobid>

featurecounts <jobid>

multiqc <jobid>

Monitor with: squeue -u $USER

Cancel all: scancel <jobid> <jobid> <jobid> <jobid> <jobid> <jobid>

Final report (after pipeline finishes): ~/scratch/rnaseq/Drosophila/qc/Drosophila_report.html

🧑💻 Hands-on walkthrough — submit the Drosophila pipeline step-by-step

If you want to see exactly what run_all.sh does (or debug one step), submit each stage manually. Every sbatch returns a job ID that the next step depends on.

Do this first (login shell — one time):

micromamba activate RNASEQ_bch709

cd ~/scratch/rnaseq/Drosophila

mkdir -p logs qc

Then submit each step — each line is one command:

# --- Step 1: download FASTQs (no prerequisites) ---

DUMP_JID=$(sbatch --parsable fastq-dump.sh)

echo "fastq-dump -> $DUMP_JID"

# --- Step 2: build STAR index (parallel with Step 1) ---

IDX_JID=$(cd reference && sbatch --parsable index.sh)

echo "index -> $IDX_JID"

# --- Step 3: trim (waits for fastq-dump) ---

TRIM_JID=$(sbatch --parsable --dependency=afterok:${DUMP_JID} trim.sh)

echo "trim -> $TRIM_JID"

# --- Step 4: align (waits for BOTH trim and index) ---

ALIGN_JID=$(sbatch --parsable --dependency=afterok:${TRIM_JID}:${IDX_JID} mapping.sh)

echo "align -> $ALIGN_JID"

# --- Step 5: featureCounts (waits for align) ---

FC_JID=$(sbatch --parsable --dependency=afterok:${ALIGN_JID} featureCounts.sh)

echo "featurecounts -> $FC_JID"

# --- Step 6: MultiQC (waits for featureCounts) ---

MQC_JID=$(sbatch --parsable --dependency=afterany:${FC_JID} multiqc.sh)

echo "multiqc -> $MQC_JID"

# Check that everything is queued

squeue -u $USER

# Steps 3-6 should show state PD with reason (Dependency)

# example squeue (your job IDs and times will differ)

JOBID PARTITION NAME USER ST TIME NODES NODELIST(REASON)

<jobid> cpu-core-0 fastqdump_Drosophi <netid> R 0:42 1 cpu-12

<jobid> cpu-core-0 index_Drosophila <netid> R 0:42 1 cpu-13

<jobid> cpu-core-0 trim_Drosophila <netid> PD 0:00 1 (Dependency)

<jobid> cpu-core-0 align_Drosophila <netid> PD 0:00 1 (Dependency)

<jobid> cpu-core-0 featurecounts_Drosop <netid> PD 0:00 1 (Dependency)

<jobid> cpu-core-0 multiqc_Drosophila <netid> PD 0:00 1 (Dependency)

Why type each step instead of just running

run_all.sh?Both produce the same dependency chain. The hands-on walkthrough lets you see each

${JID}appear and inspect outputs/logs in between. Once you’re comfortable, just runbash run_all.shnext time.

➡️ You now have a counts matrix — head over to differential expression

bam/Drosophila.featureCount.cntis the only file the DESeq2 / EdgeR analysis needs. Continue with the Drosophila DEG subsection below the## ATH DEGwalkthrough — samesamples.txtpattern, just point--matrixatDrosophila.featureCount_count_only.cnt.

Re-running a single failed step (drop

--dependency=)When only one step failed and every upstream step is already in

COMPLETEDstate, submit just the failed script with no--dependency=flag. Therun_all.shdriver only needs--dependency=...because it submits everything at once withsbatch --parsablecapturing job IDs that don’t yet exist as completed jobs. If the upstream outputs (FASTQs inraw_data/, trimmed reads intrim/, STAR index inreference/, BAMs inbam/) are already on disk, you don’t need that wiring.Arabidopsis pipeline —

cd ~/scratch/rnaseq/ATHfirst, then run only the line for the step that failed:sbatch fastq-dump.sh # download FASTQs (cd reference && sbatch index.sh) # build STAR index sbatch trim.sh # fastp trimming sbatch align.sh # STAR alignment sbatch multiqc.sh # final aggregated reportDrosophila pipeline —

cd ~/scratch/rnaseq/Drosophilafirst, then run only the line for the step that failed:sbatch fastq-dump.sh # download FASTQs (cd reference && sbatch index.sh) # build STAR index sbatch trim.sh # fastp trimming sbatch mapping.sh # STAR alignment sbatch featureCounts.sh # gene-level read counting sbatch multiqc.sh # final aggregated reportThese pipelines are loop-driven (one script processes every sample listed in

samples.txt), not Slurm--array=jobs — so to re-run a single sample, editsamples.txt(or comment out the other lines in the script’s loop) before resubmitting. There is nosbatch --array=Nshortcut here.Sanity check before resubmitting — verify upstream outputs exist:

sacct -u $USER --format=JobID,JobName%-25,State,ExitCode --starttime today ls -lh ~/scratch/rnaseq/ATH/{raw_data,trim,bam} # Arabidopsis ls -lh ~/scratch/rnaseq/Drosophila/{raw_data,trim,bam} # DrosophilaMultiQC special case —

multiqc.shis the only step submitted with--dependency=afterany:...(notafterok) insiderun_all.sh, so the report still renders even if an upstream step ended with a non-zero warning. If you re-run MultiQC standalone (no--dependency=), keep its script unchanged — do not swapafteranyback toafterokinrun_all.sh.

Mus Musculus

Data Download

https://www.ncbi.nlm.nih.gov/bioproject/PRJNA773499

CCR2-dependent monocyte-derived cells restrict SARS-CoV-2 infection (house mouse)

SARS-CoV-2 has caused a historic pandemic of respiratory disease (COVID-19) and current evidence suggests severe disease is associated with dysregulated immunity within the respiratory tract1,2. However, the innate immune mechanisms that mediate protection during COVID-19 are not well defined. Here we characterize a mouse model of SARS-CoV-2 infection and find that early CCR2-dependent infiltration of monocytes restricts viral burden in the lung. We find that a recently developed mouse-adapted MA-SARS-CoV-2 strain, as well as the emerging B.1.351 variant, trigger an inflammatory response in the lung characterized by expression of pro-inflammatory cytokines and interferon-stimulated genes. Using intravital antibody labeling, we demonstrate that MA-SARS-CoV-2 infection leads to increases in circulating monocytes and an influx of CD45+ cells into the lung parenchyma that is dominated by monocyte-derived cells. scRNA-seq analysis of lung homogenates identified a hyper-inflammatory monocyte profile. We utilize this model to demonstrate that mechanistically, CCR2 signaling promotes infiltration of classical monocytes into the lung and expansion of monocyte-derived cells. Parenchymal monocyte-derived cells appear to play a protective role against MA-SARS-CoV-2, as mice lacking CCR2 showed higher viral loads in the lungs, increased lung viral dissemination, and elevated inflammatory cytokine responses. These studies have identified a CCR2-monocyte axis that is critical for promoting viral control and restricting inflammation within the respiratory tract during SARS-CoV-2 infection. Overall design: 8 samples in total corresponding to different mice. 4 samples are from mock, control mice. 4 samples are from SARS-CoV-2 infected mice.

mkdir -p ~/scratch/rnaseq

cd ~/scratch/rnaseq/

mkdir Mmusculus && cd Mmusculus

mkdir raw_data trim bam reference

pwd

| Run ID | LibraryName |

|---|---|

| SRR16526489 | Mock 1; Mus musculus; RNA-Seq |

| SRR16526488 | Mock 2; Mus musculus; RNA-Seq |

| SRR16526486 | Mock 3; Mus musculus; RNA-Seq |

| SRR16526483 | Mock 4; Mus musculus; RNA-Seq |

| SRR16526477 | CoV2 3; Mus musculus; RNA-Seq |

| SRR16526479 | CoV2 2; Mus musculus; RNA-Seq |

| SRR16526481 | CoV2 1; Mus musculus; RNA-Seq |

| SRR16526475 | CoV2 4; Mus musculus; RNA-Seq |

Reference download

Browse: https://www.ncbi.nlm.nih.gov/genome/?term=Mus+musculus

Download files (NCBI RefSeq GRCm39)

mkdir -p ~/scratch/rnaseq/Mmusculus/reference && cd ~/scratch/rnaseq/Mmusculus/reference

NCBI_BASE="https://ftp.ncbi.nlm.nih.gov/genomes/all/GCF/000/001/635/GCF_000001635.27_GRCm39"

curl -fsSL --retry 3 --max-time 3600 -o GRCm39_genomic.fna.gz \

"${NCBI_BASE}/GCF_000001635.27_GRCm39_genomic.fna.gz"

curl -fsSL --retry 3 --max-time 600 -o GRCm39_genomic.gff.gz \

"${NCBI_BASE}/GCF_000001635.27_GRCm39_genomic.gff.gz"

gunzip -f GRCm39_genomic.fna.gz GRCm39_genomic.gff.gz

# featureCounts expects GTF — convert with gffread (already in RNASEQ_bch709)

gffread GRCm39_genomic.gff -T -F --keep-exon-attrs -o GRCm39_genomic.gtf

ls -lh

Solanum lycopersicum

Project site

Whole genome sequencing and transcriptome sequencing of Solanum lycopersicum, M82 https://www.ncbi.nlm.nih.gov/bioproject/PRJNA753098

mkdir -p ~/scratch/rnaseq

cd ~/scratch/rnaseq/

mkdir Slycopersium && cd Slycopersium

mkdir raw_data trim bam reference

pwd

| Run ID | LibraryName |

|---|---|

| SRR15607542 | Root control Rep1 |

| SRR15607543 | Root control Rep2 |

| SRR15607544 | Root control Rep3 |

| SRR15607552 | Root Salt treatment Rep1 |

| SRR15607553 | Root Salt treatment Rep2 |

| SRR15607554 | Root Salt treatment Rep3 |

Reference Download

https://phytozome-next.jgi.doe.gov/info/Slycopersicum_ITAG4_0

Download files

Slycopersicum_691_ITAG4.0.gene.gff3.gz

Slycopersicum_691_SL4.0.fa.gz

Mosquito (Anopheles stephensi)

RNAseq from adult male and female Anopheles stephensi https://www.ncbi.nlm.nih.gov/bioproject/PRJNA277477

Folder preparation

mkdir -p ~/scratch/rnaseq

cd ~/scratch/rnaseq/

mkdir Astephensi && cd Astephensi

mkdir raw_data trim bam reference

pwd

SRA read download

| Run ID | LibraryName |

|---|---|

| SRR1851022 | Anopheles stephensi male RNAseq replicate 1 |

| SRR1851024 | Anopheles stephensi male RNAseq replicate 2 |

| SRR1851026 | Anopheles stephensi male RNAseq replicate 3 |

| SRR1851027 | Anopheles stephensi female RNAseq replicate 1 |

| SRR1851028 | Anopheles stephensi female RNAseq replicate 2 |

| SRR1851030 | Anopheles stephensi female RNAseq replicate 3 |

Reference genome (VectorBase)

Browse: https://vectorbase.org/vectorbase/app/record/dataset/TMPTX_asteIndian

Reference download

mkdir -p ~/scratch/rnaseq/Astephensi/reference && cd ~/scratch/rnaseq/Astephensi/reference

VB_FA="https://vectorbase.org/common/downloads/release-68/AstephensiSDA-500/fasta/data/VectorBase-68_AstephensiSDA-500_Genome.fasta"

VB_GFF="https://vectorbase.org/common/downloads/release-68/AstephensiSDA-500/gff/data/VectorBase-68_AstephensiSDA-500.gff"

curl -fsSL --retry 3 --max-time 1800 -o AstephensiSDA-500.fasta "${VB_FA}"

curl -fsSL --retry 3 --max-time 600 -o AstephensiSDA-500.gff "${VB_GFF}"

ls -lh

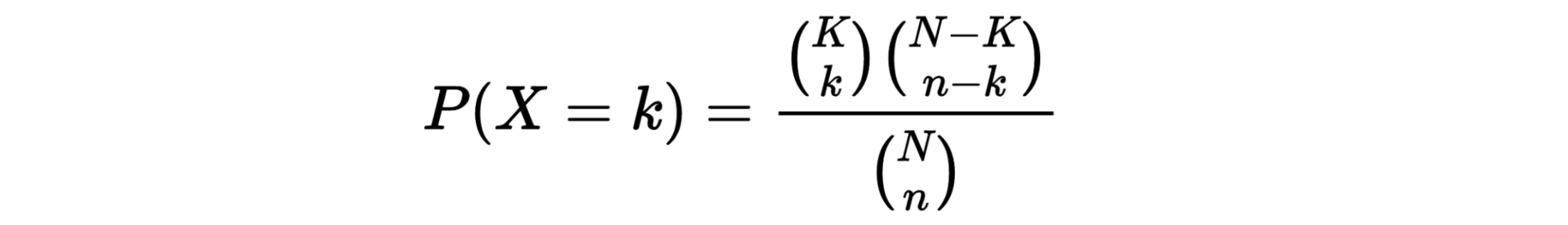

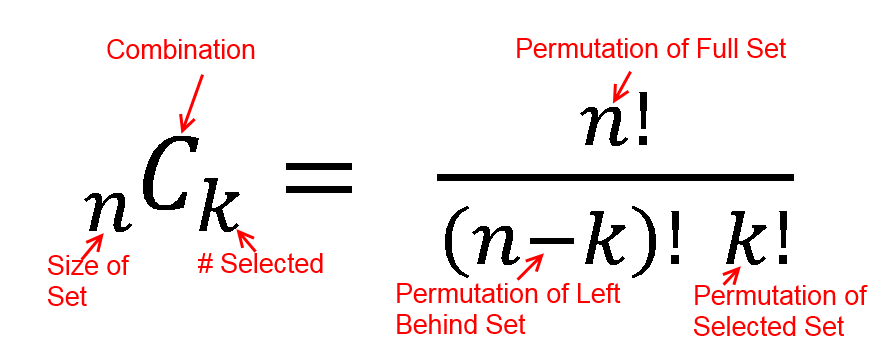

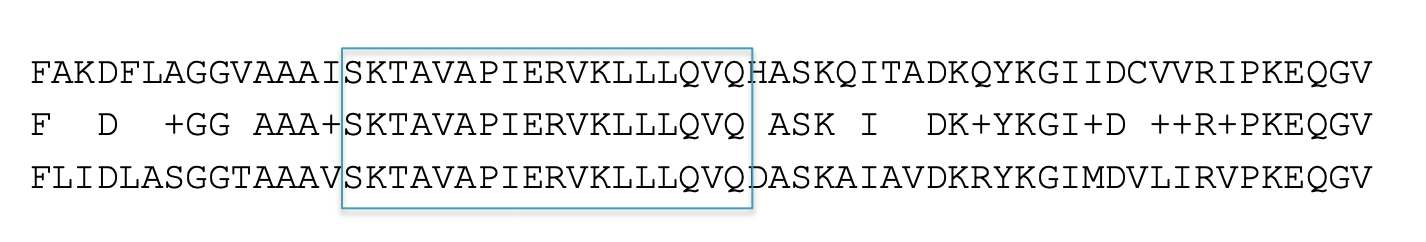

Expression values and Normalization

CPM, RPKM, FPKM, TPM, RLE, MRN, Q, UQ, TMM, VST, RLOG, VOOM … Too many…

CPM: Controls for sequencing depth when dividing by total count. Not for within-sample comparison or DE.

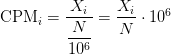

Counts per million (CPM) mapped reads are counts scaled by the number of fragments you sequenced (N) times one million. This unit is related to the FPKM without length normalization and a factor of 10^6:

RPKM/FPKM: Controls for sequencing depth and gene length. Good for technical replicates, not good for sample-sample due to compositional bias. Assumes total RNA output is same in all samples. Not for DE.

TPM: Similar to RPKM/FPKM. Corrects for sequencing depth and gene length. Also comparable between samples but no correction for compositional bias.

TMM/RLE/MRN: Improved assumption: The output between samples for a core set only of genes is similar. Corrects for compositional bias. Used for DE. RLE and MRN are very similar and correlates well with sequencing depth. edgeR::calcNormFactors() implements TMM, TMMwzp, RLE & UQ. DESeq2::estimateSizeFactors implements median ratio method (RLE). Does not correct for gene length.

VST/RLOG/VOOM: Variance is stabilised across the range of mean values. For use in exploratory analyses. Not for DE. vst() and rlog() functions from DESeq2. voom() function from Limma converts data to normal distribution.

geTMM: Gene length corrected TMM.

For DEG using DEG R packages (DESeq2, edgeR, Limma etc), use raw counts

For visualisation (PCA, clustering, heatmaps etc), use TPM or TMM

For own analysis with gene length correction, use TPM (maybe geTMM?)

Other solutions: spike-ins/house-keeping genes

Featurecount

featureCounts -p -a <GENOME>.gtf <SAMPLE1>.bam <SAMPLE2>.bam <SAMPLE3>.bam ...... -o counts.txt

micromamba activate RNASEQ_bch709

cd ~/scratch/rnaseq/ATH/bam

featureCounts -o ATH.featureCount.cnt -p --countReadPairs -a ~/scratch/rnaseq/ATH/reference/TAIR10_GFF3_genes.gtf SRR1761506.bamAligned.sortedByCoord.out.bam SRR1761509.bamAligned.sortedByCoord.out.bam SRR1761507.bamAligned.sortedByCoord.out.bam SRR1761510.bamAligned.sortedByCoord.out.bam SRR1761508.bamAligned.sortedByCoord.out.bam SRR1761511.bamAligned.sortedByCoord.out.bam

micromamba activate RNASEQ_bch709

cd ~/scratch/rnaseq/Mmusculus/bam

featureCounts -o Mmusculus.featureCount.cnt -p --countReadPairs -a ~/scratch/rnaseq/Mmusculus/reference/GRCm39_genomic.gtf -g "gene_name" <YOUR BAM FILES>

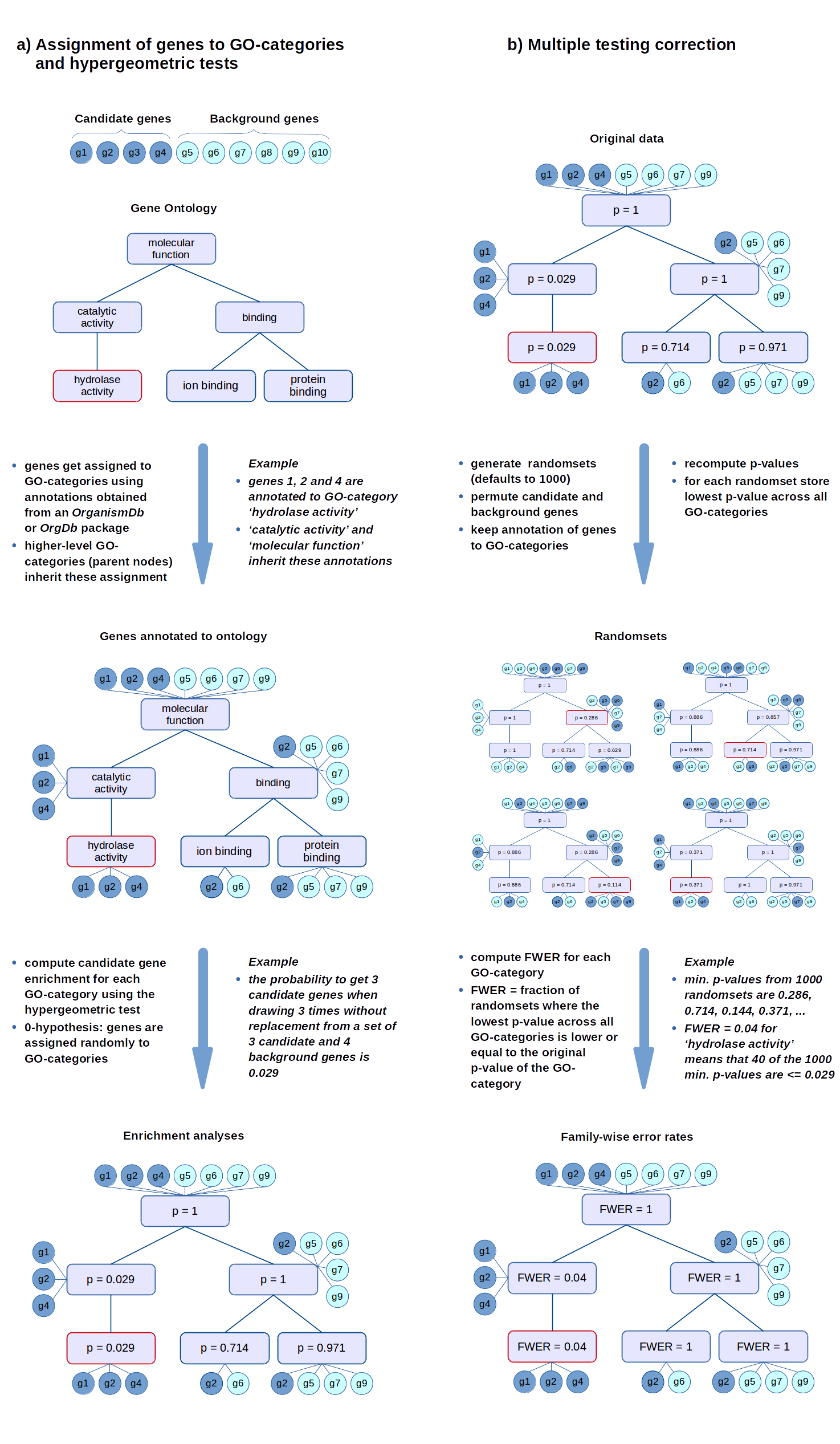

FPKM

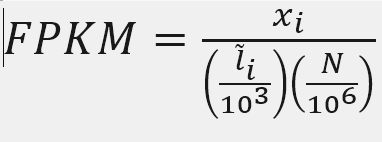

X = mapped reads count N = number of reads L = Length of transcripts

‘length’ is this transcript’s sequence length (poly(A) tail is not counted). ‘effective_length’ counts only the positions that can generate a valid fragment.

FPKM

Fragments per Kilobase of transcript per million mapped reads

X = 3752

Number_Reads_mapped = 559192

Length = 651.04

fpkm= X*(1000/Length)*(1000000/Number_Reads_mapped)

fpkm

ten to the ninth power = 10**9

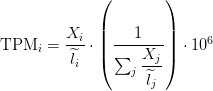

TPM

Transcripts Per Million

Paper read

FPKM

Fragments per Kilobase of transcript per million mapped reads

awk 'FNR > 2 { sum+=$7 } END {print sum}' ATH.featureCount.cnt

Example AT1G01060.TAIR10

Length = 976

X = 500

Number_Reads_mapped = 5949384

fpkm= X*(1000/Length)*(1000000/Number_Reads_mapped)

fpkm

ten to the ninth power = 10**9

fpkm=X/(Number_Reads_mapped*Length)*10**9

fpkm

TPM

sum_count_per_length

awk 'FNR > 2 { sum+=$7/$6 } END {print sum}' ATH.featureCount.cnt

egrep AT1G01060 ATH.featureCount.cnt

TPM calculation from reads count

sum_count_per_length = 4747.27

X = 500

Length = 976

TPM = (X/Length)*(1/sum_count_per_length )*10**6

TPM

TPM and FPKM calculation